DatAasee Software Documentation

Version: 0.9

The metadata-lake DatAasee centralizes bibliographic data as well as metadata of research data from distributed sources to increase metadata availability and research data discoverability, hence supporting FAIR research in university libraries, research libraries, academic libraries, or scientific libraries.

DatAasee is developed for and by the University and State Library of Münster, and is openly available under a free and open-source license.

Table of Contents:

- Explanations (understanding-oriented)

- How-Tos (goal-oriented)

- References (information-oriented)

- Tutorials (learning-oriented)

- Appendix (development-oriented)

Selected Subsections:

1. Explanations

This section collects in-depth explanations and background information.

Overview:

About

- What problem is DatAasee solving?

- DatAasee provides a single, unified, and uniform access to distributed sources of bibliographic and research metadata.

- What does DatAasee do?

- DatAasee centralizes, indexes, and serves metadata after ingesting, partially transforming, and interconnecting it.

- What is DatAasee technically?

- DatAasee is a metadata-lake.

- What is a Metadata-Lake?

- A metadata-lake (a.k.a. metalake) is a data-lake restricted to metadata.

- What is a Data-Lake?

- A data-lake is a data architecture for structured, semi-structured and unstructured data.

- How does a data-lake differ from a database?

- A data-lake includes a database, but requires further components to import and export data.

- How does a data-lake differ from a data warehouse?

- How is data in a data-lake organized?

- A data-lake includes a metadata catalog that stores data locations, their metadata, and transformations.

- What makes a metadata-lake special?

- In a metadata-lake, the data-lake and metadata catalog coincide; this implies incoming metadata records are partially transformed (cf. EtLT) to hydrate the catalog aspect.

- How does a metadata-lake differ from a data catalog?

- A metadata-lake stores textual metadata records, whereas a data catalog typically indexes databases and their structural contents.

- How is a metadata-lake a data-lake?

- The ingested metadata is stored in raw form in the metadata-lake in addition to the partially transformed catalog metadata, and transformations are performed on the raw or catalog metadata upon request.

- How does a metadata-lake relate to a virtual data-lake?

- A metadata-lake can act as a central metadata catalog for a set of distributed data sources and thus define a virtual data-lake, given data locations as part of the metadata.

- How does a metadata-lake relate to data spaces?

- A data space is a set of (meta)data sources, their interrelations, best-effort interpretation, as-needed integration, and a uniform interface for access. In this sense the metadata-lake DatAasee spans a data space.

- How does the DatAasee metadata-lake relate to a search engine?

- DatAasee can conceptually be seen as a metasearch-engine for metadata.

- What is an executive summary of DatAasee?

- DatAasee is a metadata hub for organizations with many different data and metadata sources.

Features

Items in brackets [ ] are under construction.

- Deploy via: Docker, Podman, [Kubernetes]

- Ingest: DataCite (XML), DC (XML), LIDO (XML), MARC (XML), MODS (XML)

- Ingest via: OAI-PMH (HTTP), S3 (HTTP), GET (HTTP), DatAasee (HTTP), [GraphQL (HTTP)]

- Search via: filter/facet, full-text, source, DOI

- Query by: SQL, Cypher, MQL, GraphQL, Redis

- Export as: DataCite (JSON), BibJSON (JSON), [KDSF (JSON)]

- Interact via: JSON HTTP API

- Prototype web frontend as template for manual interaction and observation

Design

- Encapsulated as a composition of component containers.

- External access is provided through an HTTP API accepting and responding only in JSON with JSON:API formatting.

- Ingests may happen via compatible (pull) protocols, e.g., OAI-PMH, S3, HTTP-GET (ingests are intended as periodic bulk operations, e.g., quarterly or semi-annually).

- Only the database component holds permanent state.

- The included frontend is optional, as it exclusively uses the HTTP API.

- For more details see the architecture documentation.

Data Model

DatAasee uses a graph database with a central node (vertex) type (cf. table) named Metadata.

The node properties are based on the DataCite metadata schema for the descriptive metadata.

For further information see the schema.

Persistence

Backup and Restore:

- The database component holds all permanent state of the DatAasee service.

- After a successful ingest, a backup is triggered.

- After a successful interconnect, a backup is triggered.

- Outside of ingest and interconnect no data is changed.

- When starting the DatAasee service, the latest backup in the specified backup location is restored.

Security

Secrets:

- Two secrets need to be handled: the datalake admin password and the database admin password.

- The default datalake admin username is admin; the password (DL_PASS) is passed during initial deployment, and there is no default password.

- The database admin username is root; the password (DB_PASS) is passed during initial deployment, and there is no default password.

- These passwords are handled as secrets by the deploying Compose file (loaded from an environment variable and provided to containers as a file).

- The database credentials are used by the backend and may also be used for manual database access.

- If the secrets are kept on the host, they need to be protected, see Secret Management.

Database:

- The database server hosts only the metadatalake database; the root account is scoped to this server instance and has no cross-system privileges.

- When deployed, the database is only directly accessible through the console from inside the database container.

- During development, the database can be directly accessed via its web studio.

Infrastructure:

- Component containers are custom-built and hardened.

- Only HTTP and Basic Authentication are used, as it is assumed that HTTPS is provided by an operator-provided HTTPS / TLS-terminating proxy server.

- There is no session data, as all interactions are per-request.

Interface:

- HTTP API GET requests are read-only, idempotent, and unauthenticated.

- HTTP API POST requests may change the state of the database and thus require authentication by the datalake admin.

- See the DatAasee OpenAPI definition.

2. How-Tos

This section compiles brief guides for typical tasks.

Overview:

- Prerequisite

- Resources

- Using DatAasee

- Production Checklist

- Deploy

- Logs

- Shutdown

- Probe

- Ingest

- Update

- Upgrade

- Reset

- Database Console

- Web Interface (Prototype)

- API Indexing

Prerequisite

The (virtual) machine deploying DatAasee requires docker compose (>= 2.37) on top of docker or podman; see also container engine compatibility.

Resources

The compute and memory resources for DatAasee can be configured via the compose.yaml.

To run, a bare-metal machine or virtual machine requires:

- Minimum: 4 CPU, 8G RAM (a Raspberry Pi 4 would be sufficient)

- Recommended: 8 CPU, 32G RAM

In terms of DatAasee components this breaks down to:

- Database:

- Minimum: 2 CPU, 4G RAM

- Recommended: 4 CPU, 24G RAM

- Backend:

- Minimum: 1 CPU, 2G RAM

- Recommended: 2 CPU, 4G RAM

- Frontend:

- Minimum: 1 CPU, 2G RAM

- Recommended: 2 CPU, 4G RAM

Note that resource and system requirements depend on load; especially the database is under heavy load during ingest. After an ingest, (new) metadata records are interrelated, which also causes heavy database load. Generally, the database drives the overall performance. Thus, to improve performance, first try to increase the memory limit (in the compose.yaml) for the database component (e.g., from 4G to 24G).

NOTE: In practice, the memory required by the database roughly corresponds to its size, which is reported on startup (in case a backup is restored) or after completing a backup. As a rough estimate for resource planning, expect 6G RAM per million records, and 1G disk per million records for backups.
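The rule of thumb above can be turned into a quick sizing helper. This is a minimal sketch: the function name estimate_resources is hypothetical. The default factors are the rough 6G RAM and 1G backup disk per million records quoted in the note, not measured guarantees.

```python
def estimate_resources(records: int,
                       ram_gb_per_million: float = 6.0,
                       disk_gb_per_million: float = 1.0) -> tuple[float, float]:
    """Rough (database RAM in GB, backup disk in GB) for a given record count."""
    if records < 0:
        raise ValueError("record count must be non-negative")
    millions = records / 1_000_000
    return millions * ram_gb_per_million, millions * disk_gb_per_million


ram, disk = estimate_resources(2_500_000)
print(f"database memory limit: ~{ram:.0f}G, backup disk: ~{disk:.1f}G")
```

Under this estimate, the recommended 24G database memory limit covers roughly four million records. Since the factors are approximate, leave some headroom.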

Using DatAasee

In this section the terms “operator” and “user” are used, where “operator” refers to the party installing, serving and maintaining DatAasee, and “user” refers to the individuals or services reading from DatAasee.

Operator Activities

- Monitoring DatAasee

- Updating DatAasee

- Ingesting from external sources

User Activities

- Schema queries (support)

- Metadata queries (data)

- Custom queries (read-only)

This means the user can only use the GET API endpoints, while the operator typically uses the POST API endpoints.

For details about the HTTP API calls, see the API reference.

NOTE: In the following, a leading space (symbolized by “␣”) is used with commands containing passwords to omit them from the shell history. This is not safe for production, as this mechanism depends on the shell implementation and its configuration. Use this only for local testing; for production, see safer methods for passing secrets.

Production Checklist

- Store and access secrets securely, see Secret Management

- Size database memory limits, see Resources

- Monitor /ready and /health probes, see HTTP API

- Put DatAasee behind a TLS termination proxy for HTTPS, see Infrastructure FAQ

- Block or restrict POST endpoints for public access via proxy

- Disable the /database endpoint via DL_SAFE, or block it for public access via proxy

- Rate limit public endpoints

Deploy

Deploy DatAasee via (Docker) Compose by providing the two secrets. DL_PASS authorizes day-to-day operator actions in the backend, like health checks or ingest. DB_PASS is used by the backend itself, and exceptionally by the operator, to interact with the database directly. For further details, see the Getting Started tutorial as well as the compose.yaml and the Docker Compose file reference.

WARNING: DatAasee must not be exposed directly to the public Internet! Put it behind a TLS-terminating reverse proxy and block the database endpoint. See compose.proxy.yaml for an example.

$ mkdir -p backup # or: ln -s /path/to/backup/volume backup

$ wget https://raw.githubusercontent.com/ulbmuenster/dataasee/0.9/compose.yaml

$ ␣DL_PASS=password1 DB_PASS=password2 docker compose up -d

NOTE: The backup folder (or mount) must be readable and writable by root (actually by the database container’s user, but root can represent them on the host). Thus, a change of ownership via sudo chown root backup is typically required. For testing purposes, chmod o+w backup is fine, but it is not recommended for production.

NOTE: To further customize your deployment, use environment variables. The runtime configuration environment variables can be stored in an .env file.

WARNING: Do not put secrets into the .env file!

Logs

$ docker compose logs backend --no-log-prefix

NOTE: The Docker logging driver local is used by default.

Shutdown

$ docker compose down

NOTE: No (database) backup is automatically triggered on shutdown!

Probe

For further details, see the /ready endpoint API reference entry (the command assumes it is run on the hosting machine).

$ wget -SqO- http://localhost:8343/api/v1/ready
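To wait for readiness from a script (e.g., after docker compose up -d), the probe can be polled. The following is a minimal sketch using only the Python standard library. It assumes that a ready service answers the /ready endpoint with HTTP status 200; consult response/ready.json for the exact body. The helper names are hypothetical.

```python
import time
import urllib.error
import urllib.request

READY_URL = "http://localhost:8343/api/v1/ready"  # default DL_PORT, run on the host


def http_status(url: str, timeout: float = 5.0) -> int:
    """Return the HTTP status of a GET request, or 0 if the server is unreachable."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:  # 4XX/5XX responses still carry a status
        return error.code
    except OSError:  # connection refused, DNS failure, timeout, ...
        return 0


def wait_until_ready(url: str = READY_URL, attempts: int = 30, delay: float = 2.0,
                     probe=http_status, sleep=time.sleep) -> bool:
    """Poll the readiness endpoint until it answers 200 or the attempts run out."""
    for _ in range(attempts):
        if probe(url) == 200:
            return True
        sleep(delay)
    return False
```

For example, wait_until_ready() returns True as soon as the service reports ready, or False after about a minute with the default settings. The probe and sleep parameters exist only so the loop can be exercised without a running server.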

Ingest

For further details, see the /ingest endpoint API reference entry (the command assumes it is run on the hosting machine).

$ wget -qO- http://localhost:8343/api/v1/ingest --user admin --ask-password --post-data \

'{"source":"https://example.invalid/oai","method":"oai-pmh","format":"mods","rights":"CC0","steward":"steward@example.invalid"}'

NOTE: This is an asynchronous action; progress and completion are only noted in the (backend) logs. Whether the backend is currently ingesting can be checked via the /ingest endpoint or the /health endpoint.

Update

$ docker compose pull

$ ␣DL_PASS=password1 DB_PASS=password2 docker compose up -d

NOTE: “Update” means: if available, new images of the same DatAasee version but with updated dependencies will be installed, whereas “Upgrade” means: a new version of DatAasee will be installed.

NOTE: An update terminates an ongoing ingest or interconnect process.

Upgrade

$ docker compose down

$ ␣DL_PASS=password1 DB_PASS=password2 DL_VERSION=0.9 docker compose up -d

NOTE: docker compose restart cannot be used to upgrade, because environment variables (such as DL_VERSION) are not updated when using restart.

NOTE: For a permanent upgrade, make sure to put the DL_VERSION variable into an .env file, or edit the compose file accordingly.

Reset

$ docker compose restart

NOTE: A reset may be necessary if the backend crashes during an ingest.

Database Console

$ ␣docker exec -it dataasee-database-1 bin/console.sh 'CONNECT remote:localhost/metadatalake root <db_pass>'

NOTE: This is for emergency use only and not needed in day-to-day operations.

Web Interface (Prototype)

All frontend pages show a menu on the left side listing all other pages, as well as an indicator of whether the backend server is ready.

NOTE: The default port for the web frontend is 8000, e.g., http://localhost:8000, and can be adapted in the compose.yaml.

The home page has a full-text search input.

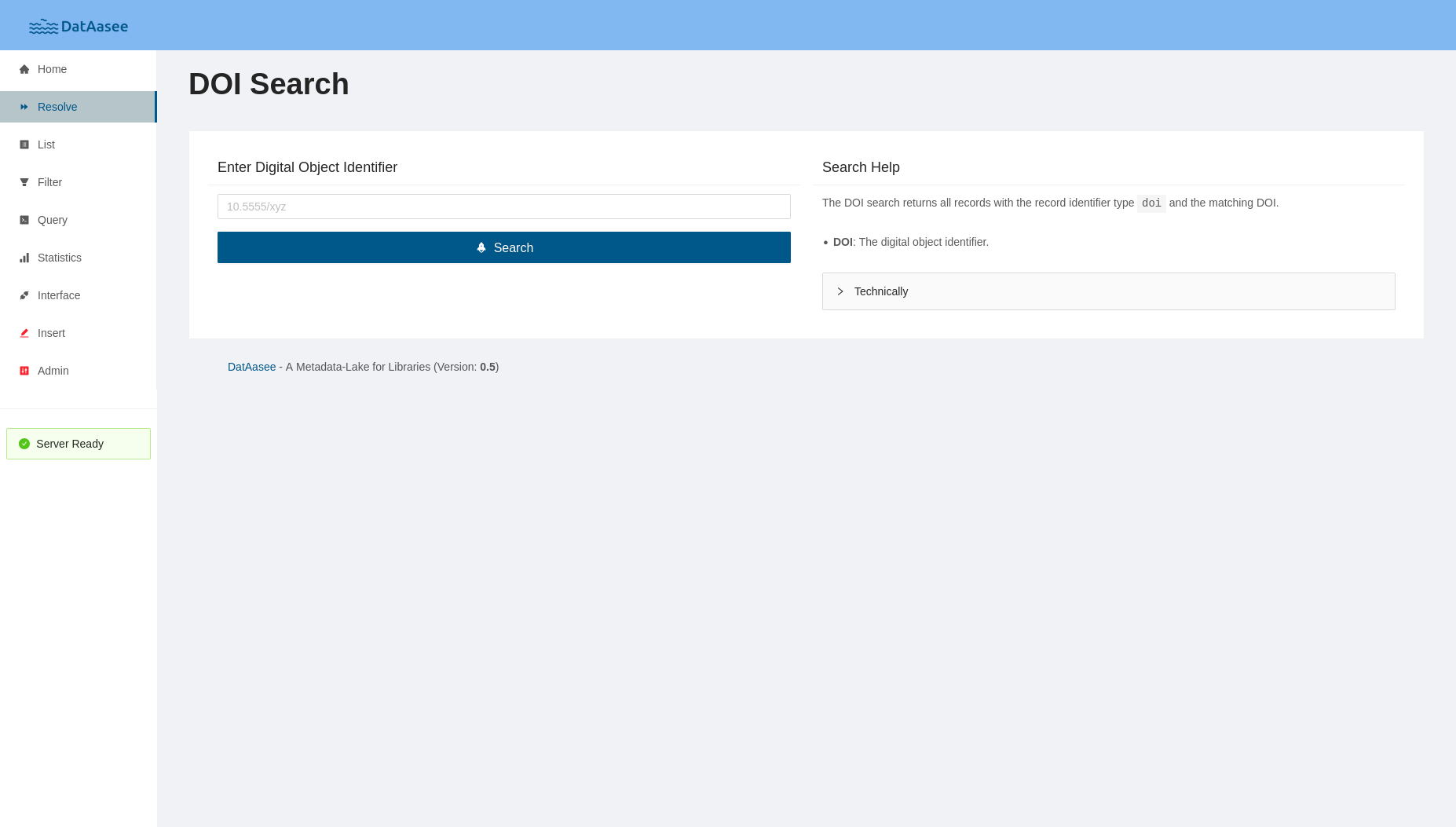

The “DOI Search” page takes a DOI and returns the associated metadata record.

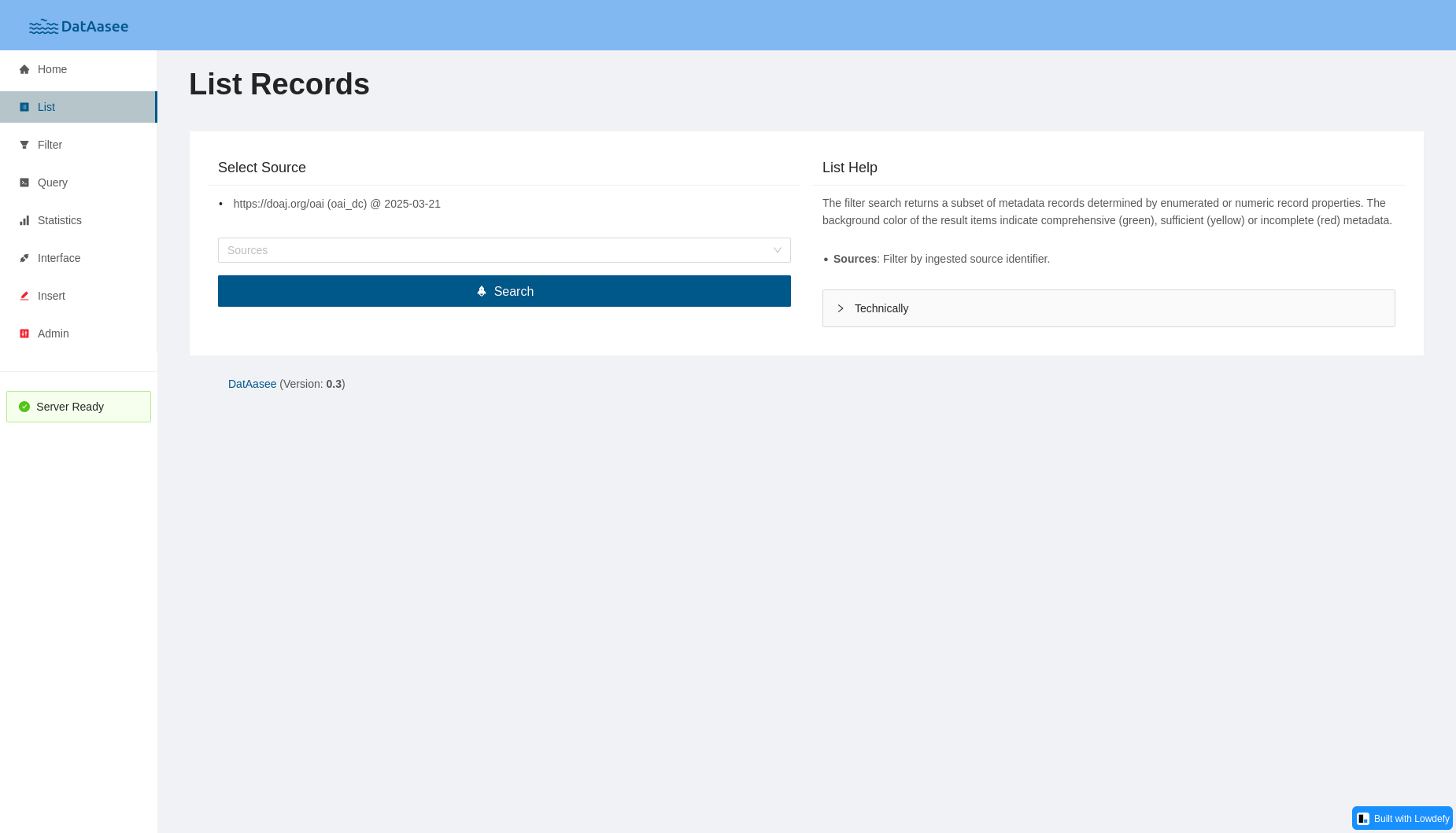

The “List Records” page lists all metadata records from a selected source.

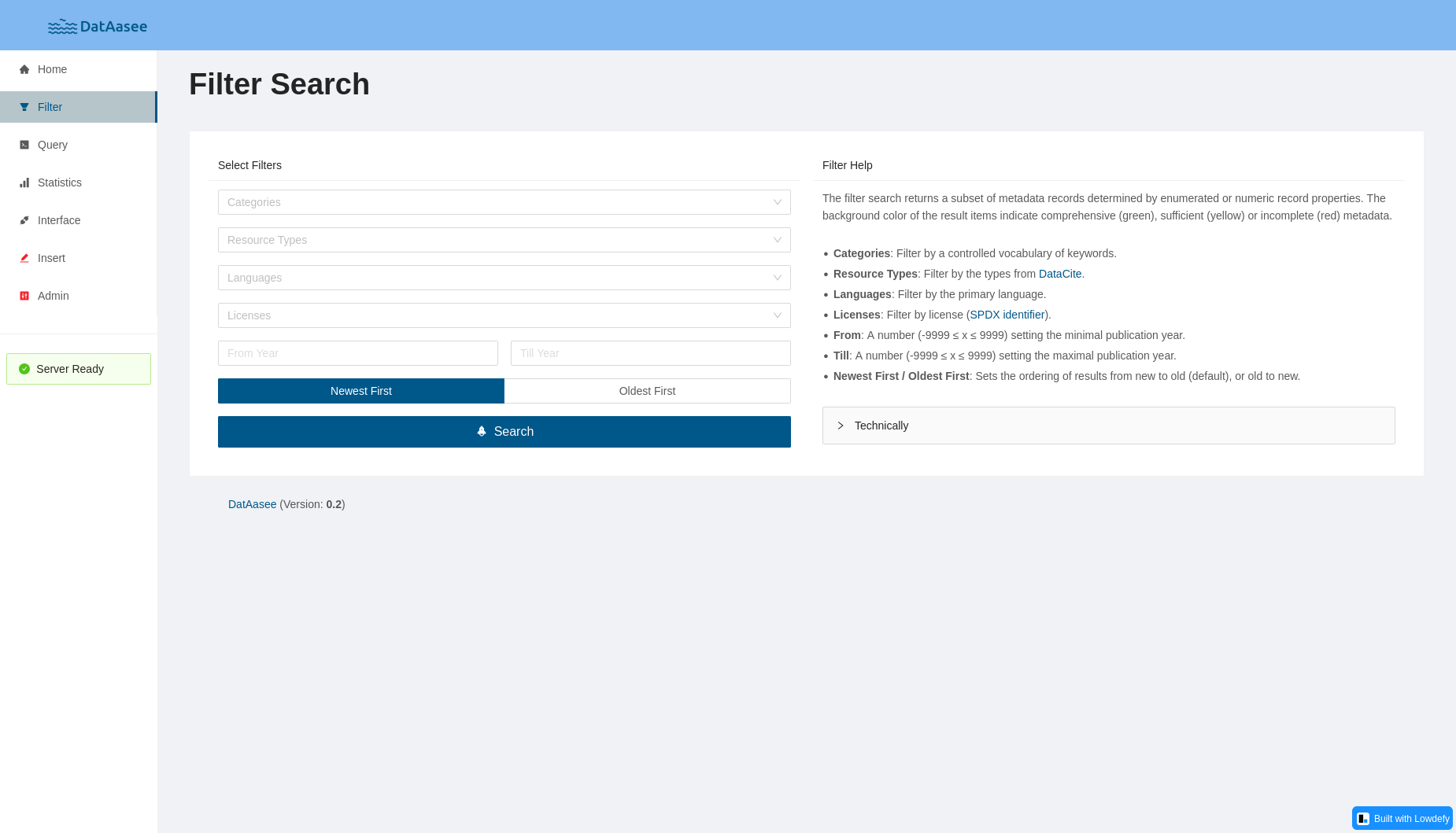

The “Filter Search” page filters for a subset of metadata records by categories, resource types, languages, licenses, or publication year.

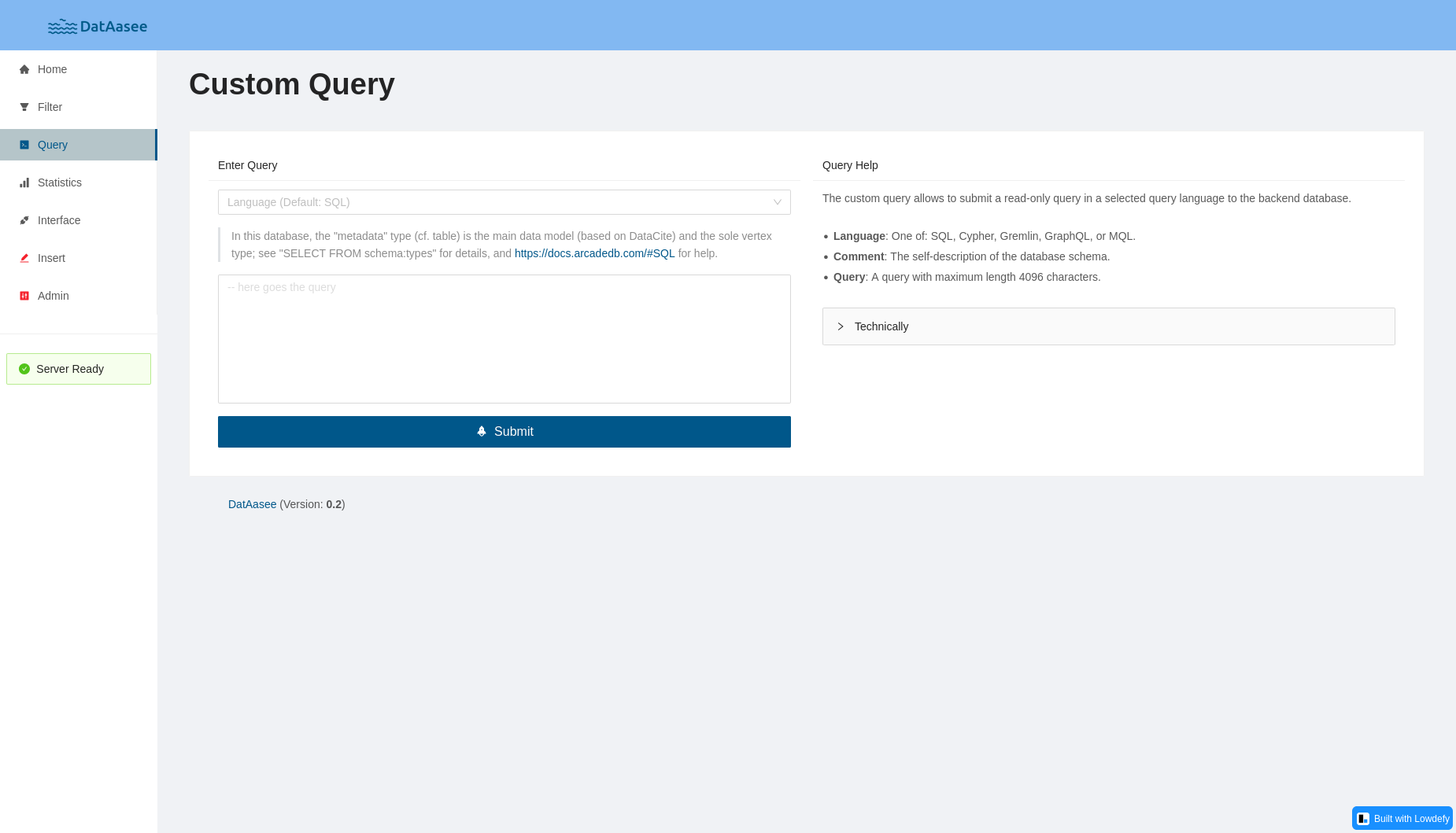

The “Custom Query” page accepts a query in sql, opencypher, mql, graphql, or redis.

The “Statistics Overview” page shows top-10 bar graphs for number of views, publication years, and keywords, as well as top-100 pie charts for resource types, categories, licenses, subjects, languages, and metadata schemas.

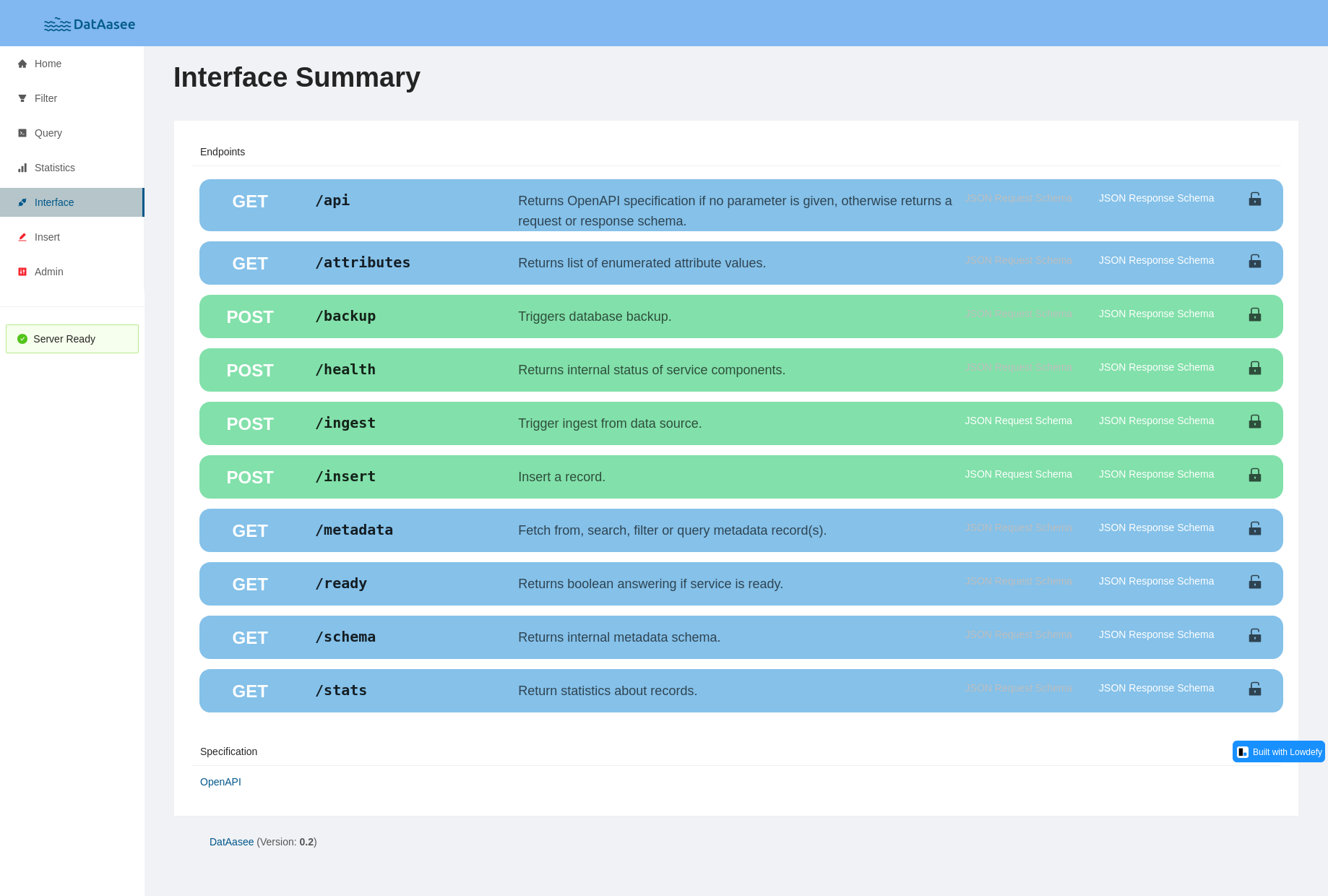

The “Interface Summary” page lists the backend API endpoints and provides links to parameter, request, and response schemas.

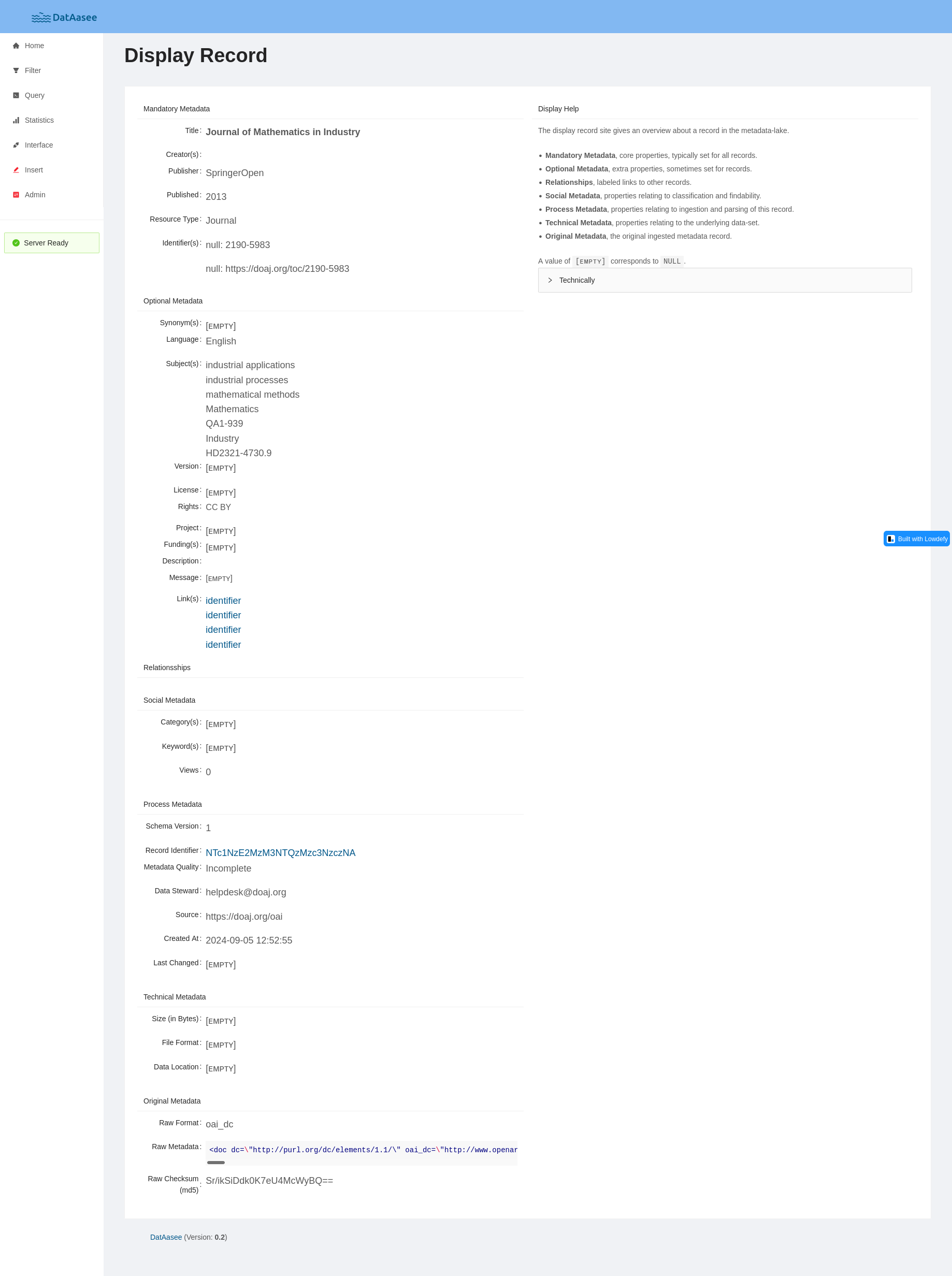

The “Display Record” page presents a single metadata record.

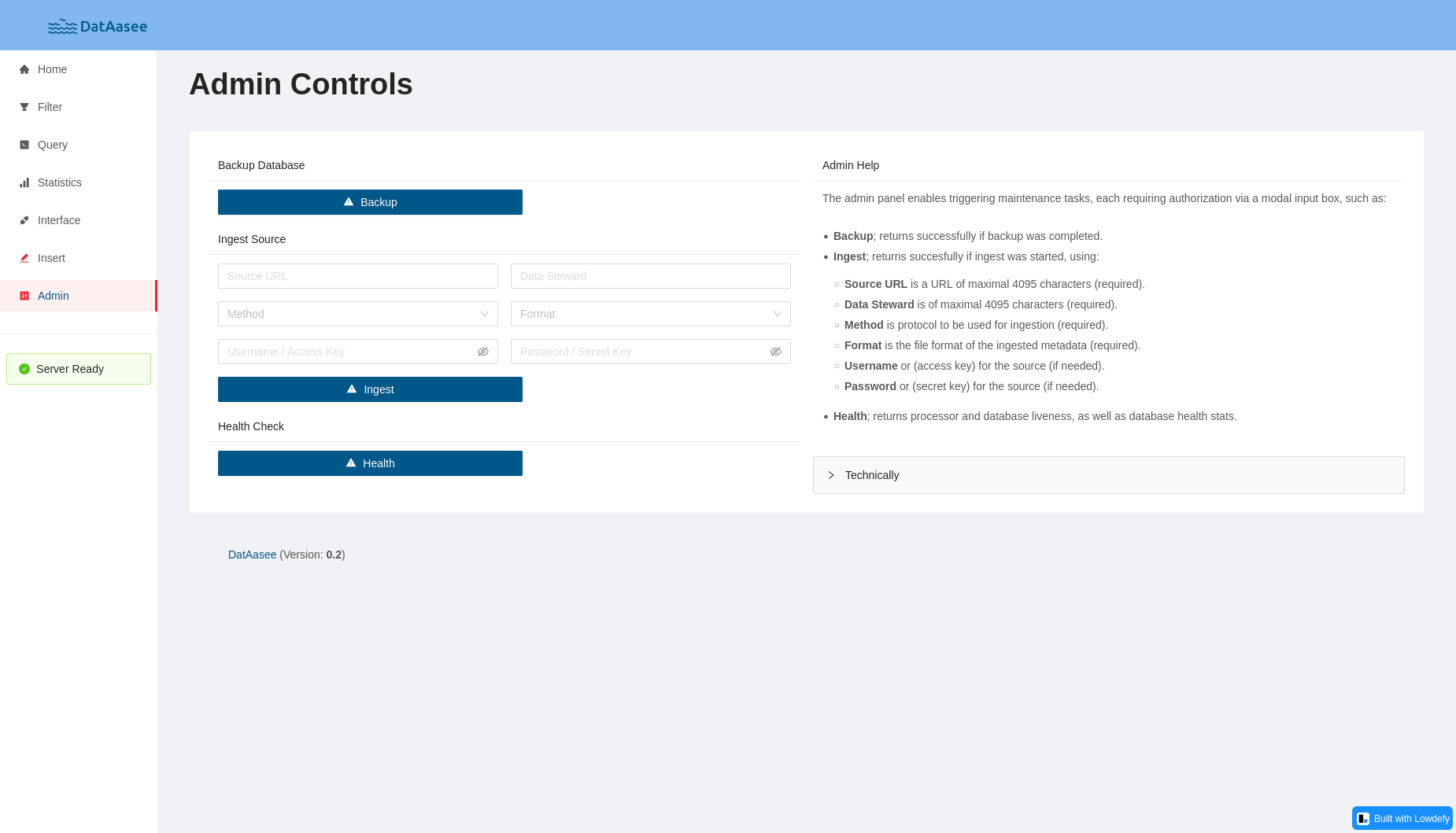

The “Admin Controls” page triggers backend actions, such as ingesting a source or running a health check.

API Indexing

Add the JSON object below to the apis array in your global apis.json:

{
  "name": "DatAasee API",
  "description": "The DatAasee API enables research data search and discovery via metadata",
  "keywords": ["Metadata"],
  "attribution": "DatAasee",
  "baseURL": "http://your-dataasee.url/api/v1",
  "properties": [
    {
      "type": "InterfaceLicense",
      "url": "https://creativecommons.org/licenses/by/4.0/"
    },
    {
      "type": "x-openapi",
      "url": "http://your-dataasee.url/api/v1/api"
    }
  ]
}
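If apis.json is maintained by a script, the entry can be merged idempotently, so re-running the script does not duplicate it. This small sketch assumes the file has a top-level apis array, as described above, and that an entry is identified by its baseURL. The function names are hypothetical.

```python
import json
from pathlib import Path


def merge_api_entry(document: dict, entry: dict) -> dict:
    """Append entry to document["apis"] unless an entry with the same baseURL exists."""
    apis = document.setdefault("apis", [])
    if not any(api.get("baseURL") == entry["baseURL"] for api in apis):
        apis.append(entry)
    return document


def update_apis_file(path: str, entry: dict) -> None:
    """Read apis.json, merge the DatAasee entry, and write the file back."""
    file = Path(path)
    document = json.loads(file.read_text(encoding="utf-8"))
    merged = merge_api_entry(document, entry)
    file.write_text(json.dumps(merged, indent=2) + "\n", encoding="utf-8")
```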

For api-catalog / FAIRiCat, add the JSON object below to the linkset array:

{
  "anchor": "http://your-dataasee.url/api/v1",
  "service-doc": [
    {
      "href": "http://your-dataasee.url/api/v1/api",
      "type": "application/json",
      "title": "DatAasee API"
    }
  ]
}

3. References

This section summarizes technical descriptions.

Overview:

- Runtime Configuration

- HTTP API

- Ingest Protocols

- Ingest Encodings

- Ingest Formats

- Native Schema

- Interrelation Edges

- Native Schema Crosswalk

- Query Languages

Runtime Configuration

The following environment variables affect DatAasee if set before starting:

| Symbol | Value | Meaning |
|---|---|---|
| TZ | Europe/Berlin (Default) | Timezone of all component servers |
| DL_PASS | password1 (Example) | DatAasee password (use only command-local!) |
| DB_PASS | password2 (Example) | Database password (use only command-local!) |
| DB_OPTS | | Custom database server options, see: https://docs.arcadedb.com/#arcadedb-settings |
| DL_VERSION | 0.9 (Example) | Requested DatAasee version |
| DL_BACKUP | $PWD/backup (Default) | Path to backup folder |
| DL_USER | admin (Default) | DatAasee admin username |
| DL_BASE | your-dataasee.url (Example) | Outward DatAasee host name |
| DL_SAFE | false (Default) | Disables the /database endpoint if true |
| DL_PORT | 8343 (Default) | DatAasee API port |
| FE_PORT | 8000 (Default) | Web frontend port |

Default Ports

| Port | Service |
|---|---|
| 8343 | DatAasee API |
| 8000 | Web Frontend |
| 2480 | Database API (development container images only) |
| 9999 | Database JMX (development container images only) |

HTTP API

By default, the HTTP API is served under http://<your-base-url>:<port>/api/v1 (see DL_BASE and DL_PORT). It is aimed at human as well as machine clients, is self-documenting, and provides the following endpoints:

| Method | Endpoint | Type | Summary |
|---|---|---|---|
| GET | /ready | system | Returns service readiness. |
| GET | /api | system | Returns API specification and schemas. |
| GET | /schema | support | Returns database schema. |
| GET | /metadata | data | Returns metadata record(s). |
| GET | /database | data | Returns results of metadata queries. |
| POST | /health | system | Returns service liveness. |
| POST | /ingest | system | Triggers async ingest of metadata records from source. |

For more details see also the associated OpenAPI definition. Furthermore, parameters, request and response bodies are specified as JSON-Schemas, which are linked in the respective endpoint entries below.

All GET requests are unchallenged; all POST requests are challenged and handled via “Basic Authentication”. The username is admin by default, or the value set via DL_USER, and the password is the one set via DL_PASS.

A POST request without an Authorization HTTP header is answered with status 401 and a WWW-Authenticate header. A POST request with an Authorization HTTP header but missing or invalid credentials is answered with status 403.
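For scripted clients, the challenge behaviour above means a POST must carry an Authorization header with Basic credentials. The following minimal Python sketch prepares, but does not send, such a request for /health or /ingest. The base URL assumes the default port on the hosting machine, and the helper names are hypothetical.

```python
import base64
import urllib.request
from typing import Optional

API = "http://localhost:8343/api/v1"  # default DL_PORT on the hosting machine


def basic_auth(user: str, password: str) -> str:
    """Value of an HTTP Basic Authorization header (RFC 7617)."""
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return f"Basic {token}"


def admin_post(endpoint: str, body: bytes = b"", user: str = "admin",
               password: Optional[str] = None) -> urllib.request.Request:
    """Prepare a POST to an admin endpoint; without a password, expect a 401 answer."""
    request = urllib.request.Request(f"{API}/{endpoint.lstrip('/')}", data=body, method="POST")
    request.add_header("Accept", "application/vnd.api+json")
    if password is not None:
        # Wrong credentials in this header are answered with 403, not 401.
        request.add_header("Authorization", basic_auth(user, password))
    return request
```

Sending the prepared request with urllib.request.urlopen raises urllib.error.HTTPError, carrying code 401 or 403, when authentication fails.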

All response bodies have content type JSON; thus, if provided, the Accept HTTP header can only be application/json or application/vnd.api+json!

Responses follow the JSON:API format, with the exception of the /api endpoint, which returns JSON files directly.

All error messages, i.e., 4XX and 5XX HTTP status responses, follow the JSON:API specification as well; see the error response JSON schema.

For the metadata endpoint, the id property in a response’s data element corresponds to the native recordId; for all other (non-resource) endpoints, the id property is the server’s Unix timestamp.
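Successful responses carry a top-level data member, and error responses a top-level errors array, so a client can normalize both cases in one place. The following is a sketch under that assumption. The example document is hypothetical and abbreviated; the authoritative layouts are the response JSON schemas served by /api.

```python
import json


def resources(body: str) -> list:
    """Return the JSON:API resource objects of a response body as a list.

    Raises RuntimeError for error documents (4XX/5XX responses).
    """
    document = json.loads(body)
    if "errors" in document:
        messages = [str(error.get("title") or error.get("detail") or "error")
                    for error in document["errors"]]
        raise RuntimeError("; ".join(messages))
    data = document.get("data")
    if data is None:
        return []
    return data if isinstance(data, list) else [data]


# Hypothetical, abbreviated /metadata response: "id" is the native recordId.
example = '{"data": {"type": "metadata", "id": "ni:dataasee", "attributes": {}}}'
print([item["id"] for item in resources(example)])
```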

/ready Endpoint

Returns a boolean answering whether the service is ready.

This endpoint is meant for readiness probes by an orchestrator, by monitoring, or in a frontend.

- HTTP Method: GET

- Authentication: None

- Request Parameters: None

- Response Body: response/ready.json

- Internal Process: see architecture

NOTE: Internally, the overall readiness consists of the backend server AND database server readiness.

Statuses

Examples

Get service readiness:

$ wget -qO- "http://localhost:8343/api/v1/ready"

/api Endpoint

Returns the OpenAPI specification if no parameter is given; otherwise, returns the parameter, request, or response schema for the given endpoint.

This endpoint documents the HTTP API as a whole, as well as the parameter, request, and response JSON schemas for all endpoints. It helps humans and machines navigate the API.

- HTTP Method: GET

- Authentication: None

- Request Parameters: params/api.json

  - params (optional; if provided, the parameter schema for the endpoint given as value is returned.)

  - request (optional; if provided, the request schema for the endpoint given as value is returned.)

  - response (optional; if provided, the response schema for the endpoint given as value is returned.)

- Response Body: response/api.json

- Internal Process: see architecture

NOTE: In case of a successful request, the response is NOT in the JSON:API format, but is the requested OpenAPI or Schema JSON file itself.

NOTE: At most one parameter can be used per request.

Statuses

- 200 OK

- 404 Not Found

- 406 Not Acceptable

- 413 Payload Too Large

- 414 Request-URI Too Long

- 500 Internal Server Error

Examples

Get OpenAPI definition:

$ wget -qO- "http://localhost:8343/api/v1/api"

Get schema endpoint parameter schema:

$ wget -qO- "http://localhost:8343/api/v1/api?params=schema"

Get ingest endpoint request schema:

$ wget -qO- "http://localhost:8343/api/v1/api?request=ingest"

Get metadata endpoint response schema:

$ wget -qO- "http://localhost:8343/api/v1/api?response=metadata"
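Because at most one parameter is accepted per request, a client helper can enforce this before sending. This is a minimal sketch; api_doc_url is a hypothetical name, and the base URL assumes the default port.

```python
from typing import Optional
from urllib.parse import urlencode

API = "http://localhost:8343/api/v1"


def api_doc_url(params: Optional[str] = None, request: Optional[str] = None,
                response: Optional[str] = None) -> str:
    """URL of the OpenAPI definition, or of one endpoint's params/request/response schema."""
    chosen = {key: value
              for key, value in (("params", params), ("request", request), ("response", response))
              if value is not None}
    if len(chosen) > 1:
        raise ValueError("/api accepts at most one parameter per request")
    return f"{API}/api" + (f"?{urlencode(chosen)}" if chosen else "")
```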

/schema Endpoint

Returns the native metadata schema.

This endpoint provides the hierarchy of the data model, labels and descriptions for all properties as well as values and facets for enumerated properties, and is meant for labels, selectors, hints or tooltips in a frontend.

- HTTP Method: GET

- Authentication: None

- Request Parameters: params/schema.json

  - prop (optional; if provided, only the selected property is returned.)

- Response Body: response/schema.json

- Internal Process: see architecture

NOTE: Keys prefixed with @ refer to meta information (schema version, type comment, or relations).

NOTE: Values for prop are case-sensitive: for example, use prop=resourceType, not prop=resourcetype.

NOTE: The response is cached and refreshed every 5 minutes.

Statuses

Examples

Get native full metadata schema:

$ wget -qO- "http://localhost:8343/api/v1/schema"

Get native metadata schema’s title property:

$ wget -qO- "http://localhost:8343/api/v1/schema?prop=title"

Get native metadata schema’s enumerated language property:

$ wget -qO- "http://localhost:8343/api/v1/schema?prop=language"

Get native metadata schema’s isRelatedTo relation:

$ wget -qO- "http://localhost:8343/api/v1/schema?prop=@isRelatedTo"

/metadata Endpoint

Fetches, lists, or searches and filters metadata record(s). Three distinct modes of operation are available (in order of precedence):

- If id is given, the record with this recordId is returned if it exists (a recordId starts with ni:), optionally transformed if an export format is given;

- if source is given, records are filtered by source identifier (source identifiers are reported by the /schema endpoint; the timestamp can be omitted);

- if neither id nor source is given, a combined full-text search via search and filter search for doi, language, resourcetype, license, category, format, from, and till is performed.

This endpoint’s responses can include pagination where appropriate.

For the combined full-text / filter search, paging via page is one-based (the first page is 1), and results can be sorted via newest. For source listings, paging is cursor-based, and the cursor is also passed via page.

Requests with id return at most one result, requests with source return at most one hundred results per page, and search/filter requests return at most twenty results per page.

This is the main endpoint, serving the metadata records of the DatAasee database like a resource.

- HTTP Method: GET

- Authentication: None

- Request Parameters: params/metadata.json

  - id (optional; if provided, the metadata record with this recordId is returned)

    - together with id, format can be datacite or bibjson

  - source (optional; if provided, metadata records from this source are returned)

  - language (optional; if provided, results filtered by language are returned)

  - search (optional; if provided, full-text search results for this value are returned)

  - resourcetype (optional; if provided, results filtered by resourceType are returned)

  - license (optional; if provided, results filtered by license are returned)

  - category (optional; if provided, results filtered by category are returned)

  - format (optional; if provided, results filtered by rawFormat are returned; also used to set the export format)

  - doi (optional; if provided, results filtered by identifiers.data are returned, for identifiers.name = 'doi')

  - from (optional; if provided, results with publicationYear greater than or equal to this value are returned)

  - till (optional; if provided, results with publicationYear less than or equal to this value are returned)

  - page (optional; if provided, the n-th page of results is returned)

    - together with source, a cursor (string) is expected for paging, which is returned in the “next” link.

    - without source, an integer (number) between 1 and 50 is expected for paging.

  - newest (optional; if provided, results are sorted new-to-old if true, or old-to-new if false; by default, there is no sorting)

- Response Body: response/metadata.json

- Internal Process: see architecture
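The precedence rules and page limits above can be summarized as a small dispatch function, e.g. for validating requests on the client side. This sketch restates the documented behaviour; it is not the backend's code. The function names and return values are hypothetical.

```python
from typing import Optional, Union


def metadata_mode(params: dict) -> tuple:
    """Return (mode, maximum results per page) for a /metadata query."""
    if "id" in params:
        return "fetch", 1       # id takes precedence over everything else
    if "source" in params:      # an explicitly empty "source=" lists all sources
        return "list", 100
    return "search", 20         # combined full-text and filter search


def check_page(mode: str, page: Optional[Union[int, str]]) -> None:
    """Validate the page parameter for the given mode."""
    if page is None or mode == "fetch":
        return
    if mode == "list" and not isinstance(page, str):
        raise ValueError("source listings expect the cursor from the 'next' link")
    if mode == "search" and not (isinstance(page, int) and 1 <= page <= 50):
        raise ValueError("search pages are one-based integers from 1 to 50")
```

Under these limits, a single search can page through at most 50 × 20 = 1,000 results.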

NOTE: Export formats are a convenience feature without round-trip guarantees.

NOTE: An explicitly empty source parameter (i.e., source=) implies all sources.

NOTE: A full-text search always matches all query terms (AND-based) in titles, synonyms, descriptions, and keywords, in any order. It accepts * as a wildcard and _ to build phrases, for example: I_*_a_dream.
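When a search phrase comes from user input, spaces can be replaced by underscores before the term is URL-encoded. This minimal sketch assumes the backend decodes percent-encoded characters such as %2A (for *), as a standard HTTP server would. The helper names are hypothetical.

```python
from urllib.parse import urlencode

API = "http://localhost:8343/api/v1"


def as_phrase(text: str) -> str:
    """'I * a dream' -> 'I_*_a_dream' (underscores keep the words together as a phrase)."""
    return "_".join(text.split())


def phrase_search_url(text: str) -> str:
    """URL for a full-text phrase search; urlencode escapes * as %2A."""
    return f"{API}/metadata?{urlencode({'search': as_phrase(text)})}"
```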

NOTE: A response includes the pagination links first, prev, and next, if applicable.

NOTE: The type field in a BibJSON export is renamed entrytype due to a collision with JSON:API rules.

NOTE: The record with id=ni:dataasee is a special record (see Example Record). It can be fetched by id, but not searched or filtered.

Statuses

- 200 OK

- 400 Bad Request

- 404 Not Found

- 406 Not Acceptable

- 413 Payload Too Large

- 414 Request-URI Too Long

- 500 Internal Server Error

Examples

Get record by record identifier:

$ wget -qO- "http://localhost:8343/api/v1/metadata?id=ni:dataasee"

Get record(s) by DOI:

$ wget -qO- "http://localhost:8343/api/v1/metadata?doi=10.5281/zenodo.13734194"

Export record in given format:

$ wget -qO- "http://localhost:8343/api/v1/metadata?id=ni:dataasee&format=datacite"

Search records by single filter:

$ wget -qO- "http://localhost:8343/api/v1/metadata?language=chinese"

Search records by multiple filters:

$ wget -qO- "http://localhost:8343/api/v1/metadata?resourcetype=book&language=german"

Search records by full-text for word “History”:

$ wget -qO- "http://localhost:8343/api/v1/metadata?search=History"

Search records by full-text and filter, oldest first:

$ wget -qO- "http://localhost:8343/api/v1/metadata?search=Geschichte&resourcetype=book&language=german&newest=false"

List records from all sources:

$ wget -qO- "http://localhost:8343/api/v1/metadata?source="
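Source listings are cursor-based, so a full listing is retrieved by following each response's next link until none is returned. This sketch assumes the next link is an absolute URL under the top-level links member, given either as a string or as a JSON:API link object with href. The fetch function is injected so the loop can be tested without a server, and all names are hypothetical.

```python
import json
import urllib.request
from typing import Callable, Iterator, Optional


def fetch_json(url: str) -> dict:
    """GET a JSON:API document."""
    request = urllib.request.Request(url, headers={"Accept": "application/vnd.api+json"})
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.load(response)


def next_link(document: dict) -> Optional[str]:
    """Extract the 'next' pagination link, if any."""
    link = (document.get("links") or {}).get("next")
    return link.get("href") if isinstance(link, dict) else link


def iter_source(url: str, fetch: Callable[[str], dict] = fetch_json) -> Iterator[dict]:
    """Yield every record of a source listing, page by page."""
    seen = set()
    current: Optional[str] = url
    while current and current not in seen:  # guard against a cursor loop
        seen.add(current)
        document = fetch(current)
        yield from document.get("data") or []
        current = next_link(document)
```

For example, iterating iter_source("http://localhost:8343/api/v1/metadata?source=") walks through the records of all sources, up to one hundred per request.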

/database Endpoint

Returns the results of queries directly against the database.

This endpoint is meant for custom queries and intended for trusted internal clients only. For public deployments, set DL_SAFE=true or block this endpoint at a reverse proxy.

- HTTP Method: GET

- Authentication: None

- Request Parameters: params/database.json

  - language (optional; sets the query language, one of sql, opencypher, mongo, graphql, or redis; SQL is assumed by default.)

  - query (required; the query.)

- Response Body: response/database.json

- Internal Process: see architecture

NOTE: Only idempotent read-only operations are permitted.

WARNING: Queries can potentially cause high loads on the database. If DatAasee is deployed publicly, this endpoint should be blocked by setting the environment variable DL_SAFE to true.

Statuses

Examples

Search records by custom SQL query:

$ wget -qO- "http://localhost:8343/api/v1/database?language=sql&query=SELECT+FROM+Metadata+LIMIT+10"
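When queries are built programmatically, URL-encoding them avoids hand-written plus signs and escapes. The following minimal sketch uses database_url, a hypothetical helper. It reproduces the SQL example above and checks the documented language identifiers; the endpoint itself only answers if DL_SAFE is not set to true.

```python
from urllib.parse import urlencode

API = "http://localhost:8343/api/v1"
LANGUAGES = {"sql", "opencypher", "mongo", "graphql", "redis"}


def database_url(query: str, language: str = "sql") -> str:
    """URL for a read-only /database query."""
    if language not in LANGUAGES:
        raise ValueError(f"unsupported query language: {language}")
    return f"{API}/database?{urlencode({'language': language, 'query': query})}"


print(database_url("SELECT FROM Metadata LIMIT 10"))
# http://localhost:8343/api/v1/database?language=sql&query=SELECT+FROM+Metadata+LIMIT+10
```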

/health Endpoint

Returns internal status and versions of service components.

This endpoint is meant for liveness checks by an orchestrator, for observability, or for manually inspecting the database and processor health and status. In particular, it reports the ingest status of the processor and the interconnect status of the database.

- HTTP Method: POST

- Authentication: Basic

- Request Body: None

- Response Body: response/health.json

- Internal Process: see architecture

Statuses

- 200 OK

- 401 Unauthorized

- 403 Forbidden (invalid credentials)

- 406 Not Acceptable

- 413 Payload Too Large

- 414 Request-URI Too Long

- 500 Internal Server Error

Examples

Get service health:

$ wget -qO- "http://localhost:8343/api/v1/health" --user admin --ask-password --post-data=''

/ingest Endpoint

Triggers an asynchronous ingest of metadata records from a source, followed by an interconnect of records.

An ingest is a two-stage process. First, the backend forwards records from an external source to the database. Second, the database interconnects all new records based on identified relations.

- HTTP Method: POST

- Authentication: Basic

- Request Body: request/ingest.json

  - source must be an HTTP(S) URL

  - method must be one of oai-pmh, s3, get, or dataasee (from another DatAasee instance)

  - format must be one of datacite, oai_datacite, dc, oai_dc, lido, marc21, marcxml, mods, rawmods, or dataasee

  - steward should be a URL or email address, but can be any description of the source’s data steward

  - rights should be a rights or license identifier, but can be any description of the source’s use restrictions

  - options (optional): ampersand-separated selective harvesting options (currently only for OAI-PMH)

  - username (optional): a username or access key for the source (currently only for S3)

  - password (optional): a password or secret key for the source (currently only for S3)

- Response Body: response/ingest.json

- Internal Process: see architecture

NOTE: The request body can be JSON or application/x-www-form-urlencoded.

NOTE: This is an asynchronous action, so the response only reports whether an ingest was started. Completion is noted in the backend logs, and the subsequent interconnect is noted in the database logs. The current ingest and interconnect status is also reported by the /health endpoint.

NOTE: Only one ingest can happen at a time, which is enforced by a backend lock and a database lock. To check whether the server is currently ingesting, send an empty body to this endpoint.

NOTE: The method and format properties are case-sensitive.

NOTE: The options field follows OAI-PMH selective harvesting. For example, incremental harvesting is possible using from=2000-01-01 or set=institution&from=2000-01-01.

NOTE: Since the record identifier is a hash of metadata properties, records are not duplicated but updated (UPSERTed) if the hash coincides.
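The deduplication works because identical inputs always hash to the same identifier. The sketch below imitates the recordId described in the Native Schema: a sha256 over source, format, source record identifier (or publisher), publicationYear, and title, base64url-encoded and prefixed with ni:. This document does not specify how the backend serializes and joins these fields. The newline separator is therefore an assumption, and the resulting ids will not match real DatAasee ids.

```python
import base64
import hashlib


def record_id(source: str, fmt: str, source_record_id: str,
              publication_year: int, title: str, sep: str = "\n") -> str:
    """Hypothetical recordId: 'ni:' + base64url(sha256(joined fields))."""
    payload = sep.join([source, fmt, source_record_id, str(publication_year), title])
    digest = hashlib.sha256(payload.encode("utf-8")).digest()  # 32 bytes
    return "ni:" + base64.urlsafe_b64encode(digest).decode("ascii")  # 3 + 44 chars


# Re-ingesting an unchanged record yields the same key, so a keyed store
# overwrites (upserts) instead of duplicating.
store = {}
for _ in range(2):
    rid = record_id("https://example.invalid/oai", "mods", "oai:example:1", 2024, "A Title")
    store[rid] = {"title": "A Title"}
print(len(store), len(rid))  # 1 47
```

The padded 44-character base64url encoding of a sha256 digest plus the three-character prefix fits the max 47 constraint listed for recordId.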

Statuses

- 200 OK

- 202 Accepted

- 400 Bad Request

- 401 Unauthorized

- 403 Forbidden (invalid credentials)

- 406 Not Acceptable

- 413 Payload Too Large

- 414 Request-URI Too Long

- 503 Service Unavailable

Examples

Check if the server is busy ingesting:

$ wget -qO- "http://localhost:8343/api/v1/ingest" --user admin --ask-password --post-data=''

Start ingest from a given source:

$ wget -qO- "http://localhost:8343/api/v1/ingest" --user admin --ask-password --post-data \

'{"source":"https://datastore.uni-muenster.de/oai2d","method":"oai-pmh","format":"datacite","rights":"CC0","steward":"fdm@uni-muenster"}'
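Method and format are case-sensitive, and only a fixed set of identifiers is accepted. Validating the request body on the client side therefore catches typos before an ingest is triggered. This sketch is based on the field list above, and ingest_body is hypothetical. It is stricter than the documentation requires: it rejects options and credentials for protocols that, per the documentation, do not use them, whereas the server may simply ignore them. Options are joined verbatim with "&", without percent-encoding; that this is what the backend expects is an assumption.

```python
import json
from typing import Optional

METHODS = {"oai-pmh", "s3", "get", "dataasee"}
FORMATS = {"datacite", "oai_datacite", "dc", "oai_dc", "lido",
           "marc21", "marcxml", "mods", "rawmods", "dataasee"}


def ingest_body(source: str, method: str, fmt: str, rights: str, steward: str,
                options: Optional[dict] = None, username: Optional[str] = None,
                password: Optional[str] = None) -> bytes:
    """Build a JSON request body for POST /ingest."""
    if not source.startswith(("http://", "https://")):
        raise ValueError("source must be an HTTP(S) URL")
    if method not in METHODS:  # case-sensitive: "OAI-PMH" is rejected
        raise ValueError(f"unknown method: {method}")
    if fmt not in FORMATS:
        raise ValueError(f"unknown format: {fmt}")
    if method == "dataasee" and fmt != "dataasee":
        raise ValueError("the dataasee protocol expects the dataasee format")
    body = {"source": source, "method": method, "format": fmt,
            "rights": rights, "steward": steward}
    if options:
        if method != "oai-pmh":
            raise ValueError("selective harvesting options are currently only used with OAI-PMH")
        # joined verbatim, as in set=institution&from=2000-01-01
        body["options"] = "&".join(f"{key}={value}" for key, value in options.items())
    credentials = {key: value for key, value in (("username", username), ("password", password))
                   if value is not None}
    if credentials and method != "s3":
        raise ValueError("username/password are currently only used with S3")
    body.update(credentials)
    return json.dumps(body).encode("utf-8")
```

The returned bytes form the same kind of JSON body that the wget example above passes via --post-data.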

Ingest Protocols

- OAI-PMH (Open Archives Initiative Protocol for Metadata Harvesting)

  - Identifier: oai-pmh

  - Supported Versions: 2.0

  - List available metadata formats via http://url.to/oai?verb=ListMetadataFormats

- S3 (Simple Storage Service)

  - Identifier: s3

  - Supported Versions: 2006-03-01

  - Expects a bucket of files in the same format, which is ingested entirely, file by file

- GET (Plain HTTP GET)

  - Identifier: get

  - Expects a single .xml file

  - The file’s contents require an XML root element (of any name).

- DatAasee

  - Identifier: dataasee

  - Supported Versions: 0.9

  - Ingests all contents from another DatAasee instance; the associated format parameter should be set to dataasee.

Ingest Encodings

Currently, XML (eXtensible Markup Language) is the only encoding for ingested metadata, with the exception of ingesting via the dataasee protocol, which uses JSON.

Ingest Formats

- DataCite

  - Identifiers: datacite, oai_datacite

  - Supported Versions: 4.5, 4.6, 4.7

  - Format Specification

- DC (Dublin Core)

  - Identifiers: dc, oai_dc

  - Supported Versions: 1.1

  - Format Specification

- LIDO (Lightweight Information Describing Objects)

  - Identifiers: lido

  - Supported Versions: 1.0

  - Format Specification

- MARC (MAchine-Readable Cataloging)

  - Identifiers: marc21, marcxml

  - Supported Versions: 1.1 (XML)

  - Format Specification

- MODS (Metadata Object Description Schema)

  - Identifiers: mods, rawmods

  - Supported Versions: 3.7, 3.8

  - Format Specification

Native Schema

The underlying DBMS, ArcadeDB, is a property-graph database whose nodes (vertices) and edges are documents (similar to JSON objects). DatAasee uses the graph structure by interconnecting records (vertex documents) via identifiers (e.g., DOIs) as part of the ingest process, according to a set of predefined relations.

Conceptually, the data model for metadata records has five sections:

- Process - information generated and assigned during the ingest process

- Technical - information about the underlying data’s appearance

- Social - discoverability information of the metadata record

- Descriptive - information about the underlying data’s content

- Raw - originally ingested metadata

The central type of the metadatalake database is the Metadata vertex type, with the following properties:

| Key | Section | Entry | Internal Type | Constraints | Comment |
|---|---|---|---|---|---|
| schemaVersion | Process | Automatic | Integer | =1 | |
| recordId | Process | Automatic | String | max 47 | base64url-encoded sha256 hash of: source, format, source record identifier (or publisher), publicationYear, title; with prefix "ni:" |
| metadataQuality | Process | Automatic | String | max 255 | Currently one of: "Incomplete", "OK" |
| dataSteward | Process | Automatic | String | max 4095 | |
| source | Process | Automatic | Link(Pair) | sources | |
| sourceRights | Process | Automatic | String | max 4095 | |
| createdAt | Process | Automatic | Datetime | | |
| sizeBytes | Technical | Optional | Integer | min 0 | |
| dataFormat | Technical | Optional | String | max 255 | |
| dataLocation | Technical | Optional | String | max 4095, URL regex | |
| categories | Social | Automatic | List(Link) | max 3 | Pass array of strings to API, returned as array of strings from API |
| keywords | Social | Optional | List(String) | max 15 | Full-text indexed |
| title | Descriptive | Mandatory | String | max 255 | Full-text indexed (longer titles are truncated, but stored in full in synonyms) |
| creators | Descriptive | Mandatory | List(Pair) | max 255 | Pass array of Pair objects (name:fullname, data:identifier) to API |
| publisher | Descriptive | Mandatory | String | max 255 | |
| publicationYear | Descriptive | Mandatory | Integer | min -9999, max 9999 | |
| resourceType | Descriptive | Mandatory | Link(Pair) | resourceTypes | Pass string to API, returned as string from API |
| identifiers | Descriptive | Mandatory | List(Pair) | max 255 | Pass array of Pair objects (name:identifier, data:type) to API |
| synonyms | Descriptive | Optional | List(Pair) | max 255 | Pass array of Pair objects (name:title, data:type) to API, name full-text indexed |
| language | Descriptive | Optional | Link(Pair) | languages | Pass string to API, returned as string from API |
| subjects | Descriptive | Optional | List(Pair) | max 255 | Pass array of Pair objects (name:name, data:identifier) to API |
| version | Descriptive | Optional | String | max 255 | |
| license | Descriptive | Optional | Link(Pair) | licenses | Pass string to API, returned as string from API |
| rights | Descriptive | Optional | String | max 65535 | |
| fundings | Descriptive | Optional | List(Pair) | max 255 | Pass array of Pair objects (name:funder, data:identifier) to API |
| description | Descriptive | Optional | String | max 65535 | Full-text indexed |
| relatedItems | Descriptive | Optional | List(Pair) | max 255 | Pass array of Pair objects (name:type, data:URL) to API |
| rawMetadata | Raw | Automatic | Link(Raw) | | |
| rawFormat | Raw | Automatic | Link(Pair) | schemas | |
| rawChecksum | Raw | Automatic | String | max 255 | SHA256 hash of rawMetadata |

NOTE: See also the custom queries section and the schema diagram: schema.md.

NOTE: The recordId property is a DatAasee-specific identifier and should not be treated as a public web identifier.

NOTE: The properties related and visited are for internal purposes only and hence not listed here.

NOTE: The preloaded set of Categories (see categories.csv) is based on the OECD Fields of Science and Technology.
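The recordId comment in the table describes a base64url-encoded SHA-256 digest with the prefix "ni:". The bash sketch below reproduces only that shape. Which fields are concatenated, in what order, and with which separator is defined in process.yaml and is not reproduced here, so the hypothetical function `record_id_sketch` takes one pre-joined input string. It relies on GNU coreutils (sha256sum, base64). A 32-byte digest gives 43 base64url characters without padding, so the result is 46 characters long, within the max 47 constraint.

```shell
# Sketch of the recordId *shape* only: "ni:" + base64url(sha256(input)), unpadded.
# ASSUMPTION: $1 is a hypothetical pre-joined string; the real field order,
# separators and normalization live in process.yaml.
record_id_sketch() {
  local hex b64
  # Hex digest of the input string.
  hex=$(printf '%s' "$1" | sha256sum | cut -d' ' -f1)
  # Turn the 64 hex digits back into 32 raw bytes, base64-encode them,
  # drop newlines and '=' padding, then map + -> - and / -> _ (URL-safe alphabet).
  b64=$(printf "$(printf '%s' "$hex" | sed 's/../\\x&/g')" | base64 | tr -d '\n=' | tr '/+' '_-')
  printf 'ni:%s\n' "$b64"
}
```

For the empty string, the sketch yields `ni:47DEQpj8HBSa-_TImW-5JCeuQeRkm5NMpJWZG3hSuFU`, which is the well-known SHA-256 of empty input in the URL-safe alphabet.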

Global Metadata

The Metadata type has the custom metadata fields:

| Key | Type | Comment |
|---|---|---|
| version | Integer | Internal schema version (to compare against the schemaVersion property) |
| comment | String | Database comment |

Property Metadata

Each schema property has a label; additionally, the descriptive properties have a comment, and enumerated properties store in enum the name of the document type that holds all admissible values.

| Key | Type | Comment |
|---|---|---|
| label | String | For UI labels |
| comment | String | For UI helper texts |
| enum | String | |

NOTE: The /schema endpoint response provides the admissible values of enumerated properties directly in enum (instead of the type name), and additionally a facets list, which is not part of the schema but a view refreshed after ingests.

Pair Documents

A helper document type used for source, creators, identifiers, synonyms, language, subjects, license, fundings, relatedItems link targets or list elements.

| Property | Type | Constraints |
|---|---|---|
| name | String | min 1, max 255 |
| data | String | max 4095, URL regex |

NOTE: The URL regex is based on stephenhay’s pattern.
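The Pair constraints can be checked client-side before data is sent to the API. The bash sketch below has a hypothetical helper name. It checks that name is 1 to 255 characters, that data is at most 4095 characters, and, following the Data Model FAQ, that data is URL-shaped only if it starts with http:// or https://. The URL test is a deliberately simplified stand-in and is NOT stephenhay's pattern, which DatAasee actually uses.

```shell
# Hypothetical client-side check of the Pair constraints.
# The URL regex is a simplified stand-in, not stephenhay's pattern.
is_valid_pair() {
  local name="$1" data="$2"
  local url_re='^https?://[^[:space:]/?#]+[^[:space:]]*$'
  (( ${#name} >= 1 && ${#name} <= 255 )) || return 1
  (( ${#data} <= 4095 )) || return 1
  # Only data that looks like a web address has to be URL-shaped.
  if [[ $data == http://* || $data == https://* ]]; then
    [[ $data =~ $url_re ]] || return 1
  fi
  return 0
}
```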

Raw Documents

A helper document type for rawMetadata.

| Property | Type | Constraints |
|---|---|---|
| value | String | |

Interrelation Edges

| Type | Domain | Range | Comment |
|---|---|---|---|
| isRelatedTo | Metadata | Metadata | Generic catch-all edge type and base type for all other edge types |
| isNewVersionOf | Metadata | Metadata | See DataCite |
| isDerivedFrom | Metadata | Metadata | See DataCite |
| hasPart | Metadata | Metadata | See DataCite |
| isPartOf | Metadata | Metadata | See DataCite |
| isDescribedBy | Metadata | Metadata | See DataCite |
| commonExpression | Metadata | Metadata | See OpenWEMI |
| commonManifestation | Metadata | Metadata | See OpenWEMI |

NOTE: The graph is directed, so the edge names have a direction. By default, the edge name refers to the outbound direction.

Edge Metadata

| Key | Type | Comment |
|---|---|---|
| label_in | String | For UI labels (outbound edge) |
| label_out | String | For UI labels (incoming edge) |

Native Schema Crosswalk

| DatAasee | DataCite | DC | LIDO | MARC | MODS |
|---|---|---|---|---|---|
| title | titles.title | title | descriptiveMetadata.objectIdentificationWrap.titleWrap.titleSet | 245, 130 | titleInfo, part |
| creators | creators.creator | creator | descriptiveMetadata.eventWrap.eventSet | 100, 700 | name, relatedItem |
| publisher | publisher | publisher | descriptiveMetadata.objectIdentificationWrap.repositoryWrap.repositorySet | 260, 264 | originInfo |
| publicationYear | publicationYear | date | descriptiveMetadata.eventWrap.eventSet | 260, 264 | originInfo, part |
| resourceType | resourceType | type | category | 007, 337 | genre, typeOfResource |
| identifiers | identifier, alternateIdentifiers.alternateIdentifier | identifier | lidoRecID, objectPublishedID | 001, 003, 020, 024, 856 | identifier, recordInfo |
| synonyms | titles.title | title | descriptiveMetadata.objectIdentificationWrap.titleWrap.titleSet | 210, 222, 240, 242, 243, 246, 247 | titleInfo |
| language | language | language | descriptiveMetadata.objectClassificationWrap.classificationWrap.classification | 008, 041 | language |
| subjects | subjects.subject | subject | descriptiveMetadata.objectRelationWrap.subjectWrap.subjectSet, descriptiveMetadata.objectClassificationWrap.classificationWrap.classification | 655, 689 | subject, classification |
| version | version | | descriptiveMetadata.objectIdentificationWrap.displayStateEditionWrap.displayEdition | 250 | originInfo |
| license | rightsList.rights | | administrativeMetadata.rightsWorkWrap.rightsWorkSet | 506, 540 | accessCondition |
| rights | rightsList.rights | rights | administrativeMetadata.rightsWorkWrap.rightsWorkSet | 506, 540 | accessCondition |
| fundings | fundingReferences.fundingReference | | | | |
| description | descriptions.description | description | descriptiveMetadata.objectIdentificationWrap.objectDescriptionWrap.objectDescriptionSet | 500, 520 | abstract |
| relatedItems | relatedIdentifiers.relatedIdentifier | related | descriptiveMetadata.objectRelationWrap.relatedWorksWrap.relatedWorkSet | 856 | relatedItem |
| keywords | subjects.subject | subject | descriptiveMetadata.objectIdentificationWrap.objectDescriptionWrap.objectDescriptionSet | 653 | subject, classification |
| dataLocation | | identifier | | 856 | location |
| dataFormat | formats.format | format | | | |
| sizeBytes | | | | | |
| isRelatedTo | relatedItems.relatedItem, relatedIdentifiers.relatedIdentifier | related | descriptiveMetadata.objectRelationWrap.relatedWorksWrap.relatedWorkSet | 780, 785, 786, 787 | relatedItem |
| isNewVersionOf | relatedItems.relatedItem, relatedIdentifiers.relatedIdentifier | | | | relatedItem |
| isDerivedFrom | relatedItems.relatedItem, relatedIdentifiers.relatedIdentifier | | | | relatedItem |
| isPartOf | relatedItems.relatedItem, relatedIdentifiers.relatedIdentifier | | | 773 | relatedItem |
| hasPart | relatedItems.relatedItem, relatedIdentifiers.relatedIdentifier | | | | relatedItem |
| isDescribedBy | | | | | |
| commonExpression | | | | | relatedItem |
| commonManifestation | identifier, alternateIdentifiers.alternateIdentifier | identifier | lidoRecID, objectPublishedID | 001, 003, 020, 024, 856 | identifier, recordInfo |

Query Languages

| Language | Identifier | Mini Tutorial | Documentation |
|---|---|---|---|
| SQL | sql | Custom Queries: SQL | ArcadeDB SQL |
| Cypher | opencypher | Custom Queries: OpenCypher | OpenCypher |
| MQL | mongo | Custom Queries: MQL | Mongo MQL |
| GraphQL | graphql | Custom Queries: GraphQL | GraphQL Spec |
| Redis | redis | Custom Queries: Redis | Redis Commands |

4. Tutorials

In this section lessons for newcomers are given.

Overview:

- Getting Started

- Example Ingest

- Example Harvest

- Secret Management

- Container Engines

- Container Probes

- Custom Queries

Getting Started

- Set up a compatible compose orchestrator.

- Download the DatAasee release:

  $ wget "https://raw.githubusercontent.com/ulbmuenster/dataasee/0.9/compose.yaml"

  or:

  $ curl -O "https://raw.githubusercontent.com/ulbmuenster/dataasee/0.9/compose.yaml"

- Create or mount a folder for backups (in case of a mount, assuming the backup volume is mounted under /backup on the host):

  $ mkdir -p backup

  or:

  $ ln -s /backup backup

- Ensure the backup location has the necessary permissions:

  $ chmod o+w backup # For testing

  or:

  $ sudo chown root backup # For deploying

- Start the DatAasee service:

  $ ␣DL_PASS=password1 DB_PASS=password2 docker compose up -d

  or:

  $ ␣DL_PASS=password1 DB_PASS=password2 podman compose up -d

Now, if started locally, point a browser to http://localhost:8000 to use the web frontend, or send requests to http://localhost:8343/api/v1/ to use the HTTP API directly, for example via wget or curl.

Example Ingest

For demonstration purposes, the collection of the “Directory of Open Access Journals” (DOAJ) is ingested. An ingest has four steps: First, the operator collects the necessary information about the metadata source, i.e., URL, protocol, format, and data steward. Second, the ingest is triggered via the HTTP API. Third, the backend ingests the metadata records from the source into the database. Fourth and last, the ingested data is interconnected inside the database.

- Check the “Directory of Open Access Journals” (in a browser) for a compatible ingest method: https://doaj.org

  The oai-pmh protocol is available.

- Check the documentation about OAI-PMH for the corresponding endpoint: https://doaj.org/docs/oai-pmh/

  The OAI-PMH endpoint URL is https://doaj.org/oai.

- Check the OAI-PMH endpoint for available metadata formats (for example, in a browser): https://doaj.org/oai?verb=ListMetadataFormats

  A compatible metadata format is oai_dc.

- Trigger the ingest:

  $ wget -qO- "http://localhost:8343/api/v1/ingest" --user admin --ask-password --post-data \
    '{"source":"https://doaj.org/oai", "method":"oai-pmh", "format":"oai_dc", "rights":"CC0", "steward":"helpdesk@doaj.org"}'

  HTTP status 202 confirms the start of the ingest. Since no steward is listed in the DOAJ documentation, a general contact is set. Alternatively, the “Ingest” form of the “Admin” page in the web frontend can be used.

- DatAasee reports the start of the ingest in the backend logs:

  $ docker logs dataasee-backend-1

  with a message such as: Ingest started from https://doaj.org/oai via oai-pmh as oai_dc.

- DatAasee reports the completion of the ingest in the backend logs:

  $ docker logs dataasee-backend-1

  with a message such as: Ingest completed from https://doaj.org/oai of 21319 records (of which 0 failed) after 0.1h.

- DatAasee starts interconnecting the ingested metadata records:

  $ docker logs dataasee-database-1

  with the message: Interconnect Started!

- DatAasee finishes interconnecting the ingested metadata records:

  $ docker logs dataasee-database-1

  with the message: Interconnect Completed!

NOTE: The interconnect is an asynchronous operation whose status is reported in the database logs or via the /health endpoint.

NOTE: Generally, the ingest method OAI-PMH should be used for suitable sources, S3 for multi-file sources, and GET for single-file sources.
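The completion message in the backend logs reports the source, the number of ingested records, and the number of failures. A small bash sketch with a hypothetical helper name can extract these three values, for example for monitoring. The message format is taken from the DOAJ example above and may change between versions.

```shell
# Hypothetical helper: from log lines on stdin, print "SOURCE TOTAL FAILED" for each
# "Ingest completed from ... of N records (of which M failed)" message.
parse_ingest_completed() {
  sed -nE 's/.*Ingest completed from ([^ ]+) of ([0-9]+) records \(of which ([0-9]+) failed\).*/\1 \2 \3/p'
}
```

Usage: `docker logs dataasee-backend-1 2>&1 | parse_ingest_completed`.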

Example Harvest

A typical use-case for DatAasee is to forward all metadata records from a specific source. To demonstrate this, the previous Example Ingest is assumed to have happened.

- Check the ingested sources:

  $ wget -qO- "http://localhost:8343/api/v1/schema?prop=source"

- Request the first set of metadata records from source https://doaj.org/oai (the source needs to be URL-encoded):

  $ wget -qO- "http://localhost:8343/api/v1/metadata?source=https%3A%2F%2Fdoaj.org%2Foai"

  At most 100 records are returned.

- Request the next set of metadata records (a next link is given in the meta object of the previous response):

  $ wget -qO- "http://localhost:8343/api/v1/metadata?source=https%3A%2F%2Fdoaj.org%2Foai&page=IzE3OjQxMTY"

  The last page can contain fewer than 100 records; all pages before it contain 100 records.

NOTE: With the source filter, full records are returned instead of the search results returned without it; see /metadata.

NOTE: No stable ordering of returned records is guaranteed.
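The harvest steps above can be automated in a short bash sketch. All helper names are hypothetical, and the sketch assumes that next holds an absolute URL and that the last page carries no next link. `urlencode` percent-encodes ASCII input for the source filter. `next_link` extracts the next value by plain text matching, so JSON escapes such as \u0026 are not decoded, and a JSON tool such as jq is preferable in practice. Because the order of returned records is not stable, the harvested set is best treated as unordered.

```shell
# Percent-encode a string for a query parameter value.
# Sketch only: assumes ASCII input (multi-byte characters are not encoded correctly).
urlencode() {
  local s="$1" out="" c i
  for (( i = 0; i < ${#s}; i++ )); do
    c="${s:i:1}"
    case "$c" in
      [a-zA-Z0-9.~_-]) out+="$c" ;;
      *) out+=$(printf '%%%02X' "'$c") ;;
    esac
  done
  printf '%s' "$out"
}

# Print the first "next" string value found in a JSON body on stdin (crude text match).
next_link() {
  grep -o '"next": *"[^"]*"' | head -n 1 | sed -e 's/^"next": *"//' -e 's/"$//'
}

# Hypothetical loop: follow "next" links from the first page until none is returned,
# appending each raw response body as one line to the output file.
harvest_source() {
  local source="$1" outfile="$2" url body
  url="http://localhost:8343/api/v1/metadata?source=$(urlencode "$source")"
  while [ -n "$url" ]; do
    body=$(wget -qO- "$url") || return 1
    printf '%s\n' "$body" >> "$outfile"
    url=$(printf '%s' "$body" | next_link)
  done
}
```

Usage: `harvest_source https://doaj.org/oai doaj.jsonl`.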

Secret Management

Two secrets need to be managed for DatAasee: the database root password and the backend admin password. To protect these secrets on a host running Docker Compose, for example, the following tools can be used:

sops

$ gpg --quick-generate-key --batch --passphrase '' sops # For testing

$ export SOPS_PGP_FP=$(gpg --with-colons --fingerprint sops | grep '^fpr:' | cut -d':' -f10 | head -n1)

$ printf "DL_PASS=password1\nDB_PASS=password2" > secrets.env

$ sops encrypt -i secrets.env

$ sops exec-env secrets.env 'docker compose up -d'

consul & envconsul

$ consul agent -dev # For testing

$ consul kv put dataasee/DL_PASS password1

$ consul kv put dataasee/DB_PASS password2

$ envconsul -prefix dataasee docker compose up -d

env-vault

$ EDITOR=nano env-vault create secrets.env

- Enter a password protecting the secrets,

- in the editor (here nano), enter the secrets line by line, for example: DL_PASS=password1 and DB_PASS=password2;

- save and exit the editor.

$ env-vault secrets.env docker compose -- up -d

openssl

$ printf "DL_PASS=password1\nDB_PASS=password2" | openssl aes-256-cbc -e -a -salt -pbkdf2 -in - -out secrets.enc

$ (openssl aes-256-cbc -d -a -pbkdf2 -in secrets.enc -out secrets.env; docker compose --env-file .env --env-file secrets.env up -d; rm secrets.env)
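The `printf ... > secrets.env` steps above briefly leave the plaintext secrets in a file whose permissions depend on the current umask, and that file is often readable by other users. The bash sketch below uses a hypothetical helper name and only standard tools (umask, chmod). It first creates the file with owner-only permissions (mode 0600) and only then writes the secrets into it. The file can then be encrypted as shown above.

```shell
# Hypothetical helper: write DL_PASS/DB_PASS into a file that is readable and
# writable by its owner only, before any secret touches the disk.
# Arguments: file dl_pass db_pass
write_secrets_env() {
  local file="$1"
  (
    umask 077                               # new files get mode 0600
    : > "$file" || exit 1                   # create (or truncate) the file
    chmod 600 "$file" || exit 1             # also tighten a pre-existing file
    printf 'DL_PASS=%s\nDB_PASS=%s\n' "$2" "$3" > "$file"
  )
}
```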

Container Engines

DatAasee is deployed via a compose.yaml (see How to deploy), which is compatible with the following container and orchestration tools:

- Docker / Podman via docker compose

- Kubernetes / Minikube via kompose

Docker Compose (Docker)

- docker

- docker compose >= 2.37

Installation see: docs.docker.com/compose/install/

$ ␣DB_PASS=password1 DL_PASS=password2 docker compose up -d

$ docker compose ps

$ docker compose down

Docker Compose (Podman)

- podman

- docker compose >= 2.37

Installation see: podman-desktop.io/docs/compose/setting-up-compose

NOTE: See also the podman compose manpage.

NOTE: Alternatively, the package podman-docker (on Ubuntu) can be used to emulate docker through podman.

NOTE: The compose implementation podman-compose is not compatible at the moment.

$ ␣DB_PASS=password1 DL_PASS=password2 podman compose up -d

$ podman compose ps

$ podman compose down

Kompose (Minikube)

- minikube

- kubectl

- kompose

Installation see: kompose.io/installation/

Rename the compose.yaml to compose.txt (so kubectl ignores it) and run:

$ kompose -f compose.txt convert --secrets-as-files

$ minikube start

$ kubectl create secret generic datalake --from-literal=datalake=password1

$ kubectl create secret generic database --from-literal=database=password2

$ kubectl apply -f .

$ kubectl get pods

$ kubectl port-forward service/backend 8343:8343 # now the backend can be accessed via `http://localhost:8343/api/v1`

$ kubectl port-forward service/frontend 8000:8000 # now the frontend can be accessed via `http://localhost:8000`

$ minikube stop

Container Probes

The following endpoints are available for monitoring the respective containers; here, the compose.yaml host names (service names) are used. Logs are written to the standard output.

Backend

Ready:

http://backend:4195/ready

returns HTTP status 200 if ready, see also Connect /ready.

Liveness:

http://backend:4195/ping

returns HTTP status 200 if live, see also Connect /ping.

Database

Ready:

http://database:2480/api/v1/ready

returns HTTP status 204 if ready, see also ArcadeDB /ready.

Liveness:

http://database:2480/api/v1/exists/metadatalake

returns HTTP status 200 if live, see also ArcadeDB /exists.

NOTE: This endpoint needs database credentials.

Frontend

Ready:

http://frontend:8000

returns HTTP status 200 if ready.
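The probes above can be polled until a container reports the expected status. Below is a bash sketch with hypothetical helper names; it requires curl. Because the URLs use the compose service names, it is meant to run inside the compose network, for example from a sidecar container. Note that the database readiness probe answers with 204, not 200. The database liveness probe additionally needs credentials, which this sketch does not pass.

```shell
# Print the HTTP status code of a URL ("000" if the connection fails).
http_status() {
  curl -s -o /dev/null --max-time 5 -w '%{http_code}' "$1" || true
}

# Succeed if URL answers with the expected status code.  Usage: probe_ok URL STATUS
probe_ok() {
  [ "$(http_status "$1")" = "$2" ]
}

# Run a command until it succeeds, at most MAX_TRIES times, sleeping DELAY seconds
# between attempts.  Usage: retry_until MAX_TRIES DELAY COMMAND [ARGS...]
retry_until() {
  local max="$1" delay="$2" try
  shift 2
  for (( try = 1; try <= max; try++ )); do
    "$@" && return 0
    (( try < max )) && sleep "$delay"
  done
  return 1
}
```

For example: `retry_until 30 2 probe_ok http://database:2480/api/v1/ready 204 && retry_until 30 2 probe_ok http://backend:4195/ready 200`.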

Custom Queries

Custom queries are meant for downstream services to customize recurring data access. A usage example for a custom query is to get a subset of metadata records, or of their contents, for which the available filters are too generic; a practical example is the statistics query in the prototype frontend.

Overall, the DatAasee database schema is based around the Metadata vertex type, whose properties correspond to a star schema in relational terms. See the schema reference as well as the schema overview for the data model.

NOTE: All custom query results are limited to 100 items per request; use a paging mechanism if needed.

NOTE: A good learning resource for SQL, Cypher, and MQL is “SQL and NoSQL Databases”.

SQL

DatAasee uses the ArcadeDB SQL dialect (via language sql).

For custom SQL queries, only single, read-only queries are admissible,

meaning:

The vertex type (cf. table) holding the metadata records is named Metadata.

Examples:

Get the schema:

SELECT FROM schema:types

Get (at most) the first one-hundred metadata record titles:

SELECT title FROM Metadata
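Custom query results are capped at 100 items, so larger result sets have to be paged. ArcadeDB SQL provides SKIP and LIMIT clauses for this. The bash sketch below uses a hypothetical helper name. It only derives the query text for a given 0-based page and assumes that DatAasee accepts these clauses in a read-only SELECT. Record order is not guaranteed to be stable otherwise, so the base query should carry an explicit ORDER BY.

```shell
# Hypothetical helper: append SKIP/LIMIT for a 0-based page of 100 items.
# Usage: paged_sql "SELECT ... ORDER BY ..." PAGE
paged_sql() {
  local query="$1" page="$2" size=100
  printf '%s SKIP %d LIMIT %d\n' "$query" $(( page * size )) "$size"
}
```

For example, `paged_sql 'SELECT title FROM Metadata ORDER BY recordId' 2` yields `SELECT title FROM Metadata ORDER BY recordId SKIP 200 LIMIT 100`.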

OpenCypher

DatAasee supports a subset of OpenCypher (via language opencypher).

For custom Cypher queries, only read queries are admissible, meaning: MATCH, OPTIONAL MATCH, RETURN

Examples:

Get labels:

MATCH (n) RETURN DISTINCT labels(n)

Get (at most) one-hundred metadata record titles:

MATCH (m:Metadata) RETURN m.title

MQL

DatAasee supports a subset of MQL (via language mongo) as JSON queries.

Examples:

Get (at most) the first one-hundred metadata records:

{ "collection": "Metadata", "query": { } }

GraphQL

DatAasee supports a subset of GraphQL (via language graphql).

GraphQL use requires some prior setup:

- A corresponding GraphQL type for the native Metadata type needs to be defined: type Metadata { recordId: ID! }

- A GraphQL query needs to be defined, for example named getMetadata: type Query { getMetadata(recordId: ID!): [Metadata!]! }

Since GraphQL type and query declarations are ephemeral, declarations and query execution should be sent together.

Examples

Get (at most) the first one-hundred metadata record identifiers:

type Metadata { recordId: ID! }

type Query { getMetadata(recordId: ID!): [Metadata!]! }

{ getMetadata }

Redis

DatAasee supports a subset of Redis commands (via language redis).

Examples

Get the record with a given recordId:

HGET Metadata[recordId] "ni:6g8aa2ARLuJ-8L1Uhjnf-dBN2Q-X1pC0Iqfuw7_yKec"

5. Appendix

In this section development-related guidelines are gathered.

Overview:

- Support Matrix

- Reference Links

- Dependency Docs

- Development Decision Rationales

- Example Record

- Development Workflows

Support Matrix

| Component | Status | Intention |

|---|---|---|

| Database Schema | Supported | Pilot, breaking changes possible until 1.1 |

| Backend API | Supported | Pilot, breaking changes possible until 1.1 |

| Compose Deployment | Reference | Pilot and evaluation |

| Frontend Container | Prototype | Testing and template |

Reference Links

- DatAasee: A Metadata-Lake as Metadata Catalog for a Virtual Data-Lake

- The Rise of the Metadata-Lake

- Implementing the Metadata Lake

- ELT is dead, and EtLT will be the end of modern data processing architecture

- Dataspace

Dependency Docs

- Docker Compose Docs

- ArcadeDB Docs

- Benthos Docs (via Redpanda Connect)

- Lowdefy Docs

- GNU Make Docs

Development Decision Rationales

User Privacy

- What user data is collected (cf. GDPR)?

- No personal data is collected by DatAasee. DatAasee also does not have user accounts.

Infrastructure FAQ

- What versioning scheme is used?

- DatAasee uses SimVer versioning, with the addition that the minor version starts with one for the first release of a major version (X.1), so during the development of a major version the minor version is zero (X.0). Version 1.1 will be the first stable, production-ready release!


- How stable is the upgrade to a new release?

- During the development releases (0.X), every release will likely be breaking, particularly with respect to the backend API and the database schema. Once version 1.1 is released, breaking changes will only occur between major versions.


- What are the four compose files for?

- The compose.develop.yaml is only for the development environment (dev-only),

- the compose.package.yaml is only for building the release container images (dev-only),

- the compose.yaml is the sole file representing a release (!),

- the compose.proxy.yaml is an extension to the compose.yaml adding a side-car proxy.


- Why does a release consist only of the compose.yaml?

- The compose configuration acts as an installation script and deploy recipe. All containers are set up on the fly by pulling their images. No other files are needed.

- Why is Ubuntu 26.04 used as base image for database and backend?

- Overall, the calendar-based versioning together with the five-year support policy for Ubuntu LTS makes keeping current easier. Generally, glibc is used, and specifically for the database, OpenJDK is supported, as opposed to Alpine.


- Why does DatAasee delegate security (i.e., http not https, basic auth not digest, no rate limiter)?

- DatAasee is a backend service supposed to run behind a proxy or API gateway, which provides https (with which basic auth is not too problematic) as well as a rate limiter. In case such infrastructure is not provided, compose.proxy.yaml serves as a starting point and illustrates how a minimal proxy can be set up via caddy: DB_PASS=password DL_PASS=password docker compose -f compose.yaml -f compose.proxy.yaml up -d.


- Why does the testing setup require busybox and wget; isn’t wget part of busybox?

- busybox is used for its onboard HTTP server; and while a wget is part of busybox, it is a slimmed-down variant; specifically, the flags --content-on-error, --post-data, and --post-file are not supported.

- Why do (ingest) tests say the (busybox) httpd was not found even though busybox is installed?

- In some distributions an extra package (e.g., busybox-extras in Alpine) needs to be installed.


- Why are there permission conflicts when using docker and podman with the same volume?

- Each container engine manages this mount internally, meaning a docker volume or podman volume is created and attached to the local backup folder. To switch engines, run make empty, which deletes all data stored in backup and the associated volume.


- Why is the database container crashing?

- If it happens, this is likely due to running out of memory; check the size of the database (for example, via the logs if restored from a backup) and ensure the database container has sufficient memory available, ideally more than the database size.

- What to expect and what to do in case of a crash?

- Restart the complete service. If a crash occurs during an ingest, the already ingested records are lost, since a backup is only performed after a complete ingest (including the subsequent interconnect).

- Why does the /api endpoint not respond in JSON:API format?

- This is for practical reasons: The payload is expected as JSON in a known format (OpenAPI or JSON-Schema), thus wrapping these JSON payloads in JSON:API would add unnecessary complexity.

Data Model FAQ

- What is the data model based on?

- The descriptive metadata in the native data model largely corresponds to the DataCite metadata schema. The remaining properties (process, technical, social, raw) are typical data-lake or data-warehouse metadata attributes.

- Why are Pairs used?

- The Pair document type is a standardized universal product type for a label (name) and detail (data), with the extra constraint that if the detail starts with http:// or https://, it must be a valid URL. This helper type is used whenever an identifier (or URL) with a human-readable name is stored.


- How is the record identifier (recordId) created?

- The record identifier is a hash of metadata source, format, source record identifier or publisher, publication year, and title; see process.yaml for details. This means that a recurring ingest from a source in which, for example, a typo in a record’s title was fixed creates a new record. The old one remains as a tombstone and is related to the new one during the interconnect.


- What happens when a source record misses mandatory properties?

- Missing or invalid properties in a source record result in an explicit null value for mandatory properties. The metadataQuality property then notes Incomplete.


Database FAQ

- How to fix the database if a /health report has issues?

- First of all, this should be a rare occurrence; if not, please report an issue. A fix can be attempted by starting a shell in the database container (see Database Console) and running the commands CHECK DATABASE FIX and REBUILD INDEX *. Information on ArcadeDB’s console can be found in the ArcadeDB Docs.


- How are enumerated properties filled?

- Enumerated types, and also suggestions for free-text fields, are stored in CSV files in the preload sub-folder. These files contain at least one column labeled (in the first line) “name” and optionally a second column labeled “data”.


- Why does the database use the container’s internal storage instead of a volume?

- First, a storage volume mounted into the container could itself be mounted on the host; if that is a remote (or just slow) resource, database performance would be severely impacted.

- When and how should backups be made?

- Automatic backups are made after a successful ingest as well as after a successful interconnect. Outside ingest and interconnect no data is altered so no additional backups are needed.

- How can a backup be made to an S3 bucket?

- Currently, the best option is to mount a bucket on the host machine via s3fs and forward this mount as the backup location for the database service in the Compose file. Alternatively, a Docker plugin like rexray/s3fs could be used to create an S3 Docker volume.


- Why does deleting backups on the host require super-user privileges?

- The user running the database inside the container has a user id and group id that do not match those of the user running the container service via Compose on the host, hence deleting requires elevated privileges. This has a safety facet: backups cannot be deleted accidentally. In case backups need to be deleted, this can be done from inside the database container, e.g. docker compose run --rm database sh -c 'rm -rf /backup/metadatalake/*' (quoted, so that the wildcard is expanded inside the container rather than by the host shell).


- What are the internal properties related and visited in the schema for?

- Both are used only for the interconnect process: related is a map of lists of related identifiers created during normalization; visited records whether an interconnect process has already connected this record to the graph.

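You can check the preload CSV layout from the Database FAQ before editing files in the preload sub-folder. That layout is a header line "name", optionally followed by a "data" column. The bash sketch below uses a hypothetical helper name. It inspects only the header line, ignores CSV quoting, and tolerates a trailing carriage return from Windows line endings.

```shell
# Hypothetical helper: succeed if the first line of the CSV file is "name" or "name,data".
check_preload_header() {
  local header
  IFS= read -r header < "$1" || [ -n "$header" ] || return 1
  header=${header%$'\r'}   # strip a trailing CR, if any
  [ "$header" = "name" ] || [ "$header" = "name,data" ]
}
```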

Backend FAQ

- Why are the main processing components part of the input and not a separate pipeline?

- Since ingests may take a long time, an ingest is only triggered, and the successful triggering is reported in the response while the ingest keeps running. This asynchronous behavior is only possible with a buffer, which has to be directly after the input and after the sync_response of the trigger; therefore, the input’s post-processing processors are used as the main pipeline.


- Why is the content type application/json used for responses and not application/vnd.api+json?

- Using the official JSON MIME type makes a response more compatible and states what it is in more general terms. Requested content types, on the other hand, may be either empty, */*, application/json, or application/vnd.api+json.


- Why are there limits for requests and their bodies and what are they?

- This is an additional defense against exhaustion attacks. A parsed request header together with its URL may not exceed 8192 bytes; similarly, the request body may not exceed 12288 bytes.

- How could a rate limiter be added directly?

- A local rate limit resource can be added to the http_server input component, see https://docs.redpanda.com/redpanda-connect/components/rate_limits/about/ .


- Why are namespaces not preserved in the raw metadata?

- This is caused by an issue in a Go library used by Connect; it has been reported, see: https://github.com/redpanda-data/connect/issues/3928 .

- This is an issue in a Go library used by

Frontend FAQ

- Why is the frontend a prototype?

- The frontend is not meant for production use but serves as a system testing device, a proof of concept, living documentation, and a simplification for manual testing. Thus, it has the layout of an internal tool. Nonetheless, it can be used as a basis or template for a production frontend.

- Why is there custom JS defined?

- This is necessary to enable triggering the submit button when pressing the “Enter” key.

- Why does the frontend container use the backend name explicitly and not the host loopback, e.g. extra_hosts: [host.docker.internal:host-gateway]?

- Because podman does not seem to support it (yet).

- Why is there an index.html in addition to the included frontend?

- The static index.html is supposed to be opened locally in your browser and is not served. It serves two purposes: first, lightweight manual testing, and second, a plain vanilla implementation example (all in one file in about 400 lines). Note that on the index.html site, the API base address can be set, and hence non-local DatAasee instances can also be tested or used.

- How can the frontend be removed?

- Remove the YAML object "frontend" from compose.yaml (all lines below ## Frontend # ...).
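The manual edit can also be scripted. The following Python sketch assumes that the frontend object starts at the ## Frontend comment line and ends either at the next comment line starting with ## (another section) or at the end of the file; remove_frontend_section is a hypothetical helper, and its result should be reviewed before use.

```python
def remove_frontend_section(compose_text: str) -> str:
    """Drop the "## Frontend" comment line and the lines belonging to it."""
    kept = []
    skipping = False
    for line in compose_text.splitlines(keepends=True):
        marker = line.lstrip()
        if marker.startswith("## Frontend"):
            skipping = True  # start of the frontend block
            continue
        if skipping and marker.startswith("## "):
            skipping = False  # another "## ..." section begins (assumed layout)
        if not skipping:
            kept.append(line)
    return "".join(kept)
```

With path = pathlib.Path("compose.yaml"), the file could then be rewritten via path.write_text(remove_frontend_section(path.read_text())).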

Example Record

The following is a minimal test record, stored in the processor (not the database) and accessible via the special record identifier (a.k.a. recordId) ni:dataasee:

{
  "createdAt": "2026-04-07 13:16:08",
  "creators": [
    {
      "name": "C. Himpe"
    }
  ],
  "dataSteward": "dataasee",
  "identifiers": [
    {
      "data": "doi",
      "name": "10.5281/zenodo.13734194"
    }
  ],
  "metadataQuality": null,
  "publicationYear": null,
  "publisher": "ULB Münster",
  "rawChecksum": null,
  "rawFormat": "dataasee",
  "recordId": "ni:dataasee",
  "relatedItems": [
    {
      "data": "https://github.com/ulbmuenster/dataasee",
      "name": "repository"
    }
  ],
  "resourceType": "Software",
  "schemaVersion": 1,
  "source": "dataasee",
  "title": "DatAasee",
  "version": "0.9"
}

Development Workflows

Development Setup

- git clone https://github.com/ulbmuenster/dataasee && cd dataasee (clone repository)

- make setup (builds container images locally)

- make start (starts development setup)

Testing

- make test (automatic testing, requires a running development setup)

- Dev Frontend (manual testing)

- Prototype Frontend (manual testing)

Release Builds

- make build

- The environment variable REGISTRY sets the registry of the container images (default is localhost.localhost).

- The repository name is dataasee and the image name is the service name (database, backend, frontend).

- The environment variable DL_VERSION sets the tag of the container image (by default read from .env).

- Altogether this gives:

$REGISTRY/dataasee/{database,backend,frontend}:$DL_VERSION
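The naming rule can be mirrored in a short Python sketch, for example to predict which image references a release build will produce. It reproduces the rule stated above, not the Makefile itself; read_dotenv and image_names are hypothetical helpers, and the sketch assumes .env holds plain KEY=VALUE lines.

```python
from __future__ import annotations

SERVICES = ("database", "backend", "frontend")
DEFAULT_REGISTRY = "localhost.localhost"


def read_dotenv(text: str) -> dict[str, str]:
    """Parse simple KEY=VALUE lines (assumed .env format)."""
    values: dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue  # skip blanks, comments, and malformed lines
        key, value = line.split("=", 1)
        values[key.strip()] = value.strip().strip("\"'")
    return values


def image_names(env: dict[str, str], dotenv_text: str = "") -> list[str]:
    """Build $REGISTRY/dataasee/<service>:$DL_VERSION for every service."""
    registry = env.get("REGISTRY") or DEFAULT_REGISTRY
    version = env.get("DL_VERSION") or read_dotenv(dotenv_text).get("DL_VERSION")
    if not version:
        raise ValueError("DL_VERSION is neither set nor found in .env")
    return [f"{registry}/dataasee/{service}:{version}" for service in SERVICES]
```

For instance, image_names(dict(os.environ), pathlib.Path(".env").read_text()) would list the three image references for the current environment.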

Compose Setup

- make xxx (uses docker compose)

- make xxx COMPOSE="docker compose" (uses docker compose)

- make xxx COMPOSE="podman compose" (uses podman compose)

Dependency Updates

- Dependency listing

- Dependency versions

- Version verification (Frontend only)

Schema Changes

API Changes

Dev Monitoring

- Use lazydocker (select tabs via [ and ])

Coding Standards

- YAML and SQL files must have a comment header line containing: dialect, project, license, author.

- YAML should be restricted to StrictYAML (except .gitlab-ci.yml).

- SQL statements should be all-caps.
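Part of the header rule can be checked mechanically. The Python sketch below only verifies that the first line of a YAML or SQL file is a comment in that file type's syntax (# for YAML, -- for SQL); whether the line names dialect, project, license, and author is left to review, since the exact header format is not specified here. has_comment_header is a hypothetical helper.

```python
# Line-comment markers of YAML and SQL.
COMMENT_MARKERS = {".yaml": "#", ".yml": "#", ".sql": "--"}


def has_comment_header(filename: str, text: str) -> bool:
    """Check that the first line of a YAML or SQL file is a comment."""
    for suffix, marker in COMMENT_MARKERS.items():
        if filename.endswith(suffix):
            lines = text.splitlines()
            return bool(lines) and lines[0].startswith(marker)
    return True  # other file types are not subject to this rule
```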

Release Management

- Each release is marked by a tag.

- The latest section of the CHANGELOG becomes its description.

- For each tag, a branch named after the version is created.
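Extracting the latest CHANGELOG section for a release description can be sketched in Python. The sketch assumes, hypothetically, that each release section begins with a Markdown heading line starting with "## " and that the newest section comes first; latest_changelog_section is an illustrative name.

```python
def latest_changelog_section(text: str, heading: str = "## ") -> str:
    """Return the first section of a changelog, from its heading up to the next one."""
    lines = text.splitlines()
    start = None
    for i, line in enumerate(lines):
        if line.startswith(heading):
            if start is None:
                start = i  # first (latest) section begins here
            else:
                return "\n".join(lines[start:i]).strip()  # next section reached
    if start is None:
        return ""  # no section heading found
    return "\n".join(lines[start:]).strip()
```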